
Strategies, metrics, and plays that work.

User engagement is one of those terms that feels universally understood, until someone asks "how engaged are our users?" and the answers stop being satisfying. You look at logins, sessions, and feature clicks and get a dashboard that looks busy and important but doesn't explain what's really happening.

This guide cuts through the noise. Whether you're a product manager, lifecycle marketer, or customer success leader, you'll find a practical framework for diagnosing engagement problems, a full library of user engagement strategies in play format, and the key metrics that tell you whether any of it is working.


User engagement is defined by how actively involved and interested users are in your software.

It refers to the level of interaction and response that users have with the content and features of the software, and can be measured by metrics like the number of clicks, likes, shares, comments, or time spent.

More precisely, user engagement is about progress, not presence. It's how many users reach meaningful outcomes, understand why those outcomes matter, return to achieve them again, and deepen their usage over time.

As Lincoln Murphy puts it: "Engagement is when your customer is realizing value from your SaaS."

The user engagement rate represents the percentage of users who remain active within your product over a defined period of time. The share of users who remain truly engaged is a strong indicator of overall product health, and any change in this metric can be a leading indicator of problems down the road.

User Engagement Rate = (Users who performed an engagement action ÷ Total eligible users) × 100
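As a rough illustration, the formula above can be computed from a raw event log. This is a minimal Python sketch, not a reference implementation. The following are all hypothetical assumptions to replace with your own definitions:

- the `(user_id, event_name, event_date)` tuple shape
- the event names
- which events count as "engagement actions"

Plain logins are deliberately excluded, in line with the idea that engagement is about progress, not presence.

```python
from datetime import date

# Hypothetical set of events that count as an "engagement action".
# Logins are intentionally left out: showing up is not the same as progress.
ENGAGEMENT_ACTIONS = {"report_created", "workflow_completed"}


def user_engagement_rate(events, eligible_users, start, end):
    """Return the percentage of eligible users who performed at least one
    engagement action between start and end (both inclusive)."""
    if not eligible_users:
        return 0.0
    engaged = {
        user_id
        for user_id, event_name, event_date in events
        if event_name in ENGAGEMENT_ACTIONS
        and start <= event_date <= end
        and user_id in eligible_users
    }
    return len(engaged) / len(eligible_users) * 100


# Hypothetical event log: (user_id, event_name, event_date)
events = [
    ("u1", "login", date(2024, 5, 2)),
    ("u1", "report_created", date(2024, 5, 3)),
    ("u2", "login", date(2024, 5, 4)),              # logged in, but no progress
    ("u3", "workflow_completed", date(2024, 5, 10)),
    ("u4", "report_created", date(2024, 6, 1)),     # outside the May window
]
eligible = {"u1", "u2", "u3", "u4"}

print(user_engagement_rate(events, eligible, date(2024, 5, 1), date(2024, 5, 31)))  # 50.0
```

Here u2 logged in but never made progress, so only two of the four eligible users count as engaged for May.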

The main challenge is that the definition of "active" differs between products. A social media automation tool might have users logging in every day; a budgeting tool might see monthly logins and still count that as high user engagement. What matters is whether users are repeatedly getting value, not just showing up.

User engagement and customer engagement are often used interchangeably, but they refer to separate concepts:

User engagement focuses on what happens inside the product: how users learn, discover value, and build habits.

Customer engagement is typically high-touch and relationship-driven, covering onboarding calls, QBRs, and proactive check-ins.

Here’s a breakdown of what each category covers:

| User engagement | Customer engagement |
|---|---|
| How users learn as they go | Onboarding calls |
| How they discover value on their own | Training sessions |
| How they build habits | QBRs |
| How the product guides them without a human in the loop | Proactive check-ins |
| | One-to-one guidance |

When teams blur these two, engagement strategy gets murky. If usage only improves when customer success intervenes, what you have is a manual safety net, not a scalable user engagement strategy.

Strong teams treat both as complementary, reinforcing one another to create a comprehensive view of customer health.

Active and engaged users are the foundation of every successful SaaS company. Subscription revenue is generated over months or even years.

Some healthy SaaS companies take upwards of 5 to 7 months to start generating positive revenue, which makes it critical that users continue engaging with your product for as long as possible.

Companies use user engagement strategies to create more loyal customers with healthy, long-term usage habits.

A disengaged user rarely touches your product and will inevitably evaluate whether it's worth keeping during any cost review.

Research shows 36.5% of consumers would spend more on a product from a brand they were loyal to, making customer satisfaction a direct revenue driver.

More engaged users stay longer, giving your team more opportunities to upsell and renew.

Research shows 58% of respondents would pay more for a better customer experience.

Upselling an existing customer costs roughly half as much as acquiring a new one, making expansion revenue one of the most powerful levers for sustainable SaaS growth.

Retention tells you what's already happened; user engagement is a leading indicator. When engagement is healthy, you see stronger retention, steadier feature adoption, fewer reactive support conversations, and more natural expansion.

When it's weak, symptoms show up downstream: users activate once and disappear; feature launches land to silence; churn feels sudden.

Most engagement problems don't start with lazy users or a broken product. They come from a small set of recurring patterns that are worth naming directly.

Before reaching for a strategy, it helps to know where momentum is actually breaking. Three barriers consistently keep engagement teams stuck, regardless of company size or product category.

Teams that own engagement metrics often can't access the product usage data required to personalize experiences.

A lifecycle marketer may know exactly which feature would help a specific customer segment succeed, but getting the list of users who fit that profile requires multiple requests across departments and takes weeks to fulfill. By then, some of those users have already churned.

Teams who've solved this data access problem report 40% higher confidence in hitting their engagement goals.

Most teams know they should deliver timely in-app guidance based on user behavior. In practice, they're stuck answering support tickets and sending reactive check-ins rather than anticipating what users need before they get stuck.

When a user signals readiness to adopt a new feature, the window to respond is minutes, not days.

Teams that time their touchpoints based on user behavior are twice as likely to achieve their engagement goals.

62% of SaaS teams use three or more tools to execute their engagement programs, and teams in that situation were 45% more likely to report underperforming on engagement KPIs. The issue isn't the number of tools; it's that none of them were built to work together, so the experience users receive is fragmented even when the team's intentions aren't.

Chrissy Quiñones, Digital Customer Success Program Manager at Fullstory, described what it looked like to break through these barriers after consolidating her team's engagement tooling: "We're saying, 'Hey, we know you are a product manager, and this is a use case that we think is going to help you.' Here's a CTA that is sending you directly into our app." The result: a 3.1% increase in activation rate, email open rates up to 35%, and click rates up to 5.2%.

Once you know which problem you're dealing with, the next question is which moments in the user journey deserve a response. Not every action warrants one. High-impact moments to target include:

Basic navigation, regular log-ins, standard page views, and routine actions don't warrant intervention. Responding to them creates noise that trains users to ignore your guidance altogether.

To find your product's high-impact moments: analyze which specific actions correlate with long-term retention, review support tickets and NPS comments for friction points, interview power users about what "unlocked" the product's value for them, and track where users drop off most consistently.
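One simple way to start the first of those analyses is to compare retention between users who did and didn't perform a candidate action. The sketch below makes these assumptions:

- You already have a per-user summary that records whether each user performed the action early (here, in their first week).
- It also records whether they were still active at a later checkpoint (here, day 30).
- The field names and both time windows are hypothetical.

A gap between the two rates shows correlation, not causation, so treat it as a lead to validate with experiments and user interviews.

```python
from dataclasses import dataclass


@dataclass
class UserSummary:
    # Hypothetical per-user summary; build it from your own analytics export.
    user_id: str
    did_action_in_first_week: bool
    retained_at_day_30: bool


def retention_by_action(users):
    """Return (retention % for users who did the action,
               retention % for users who did not)."""
    def rate(group):
        if not group:
            return 0.0
        # True counts as 1 and False as 0 when summed.
        return sum(u.retained_at_day_30 for u in group) / len(group) * 100

    did = [u for u in users if u.did_action_in_first_week]
    did_not = [u for u in users if not u.did_action_in_first_week]
    return rate(did), rate(did_not)


users = [
    UserSummary("u1", True, True),
    UserSummary("u2", True, True),
    UserSummary("u3", True, False),
    UserSummary("u4", False, True),
    UserSummary("u5", False, False),
    UserSummary("u6", False, False),
]
with_action, without_action = retention_by_action(users)
print(f"{with_action:.1f}% vs {without_action:.1f}%")  # 66.7% vs 33.3%
```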

Warning signs you're missing key moments: users churning right after signup, feature adoption plateaus, support tickets about discoverable features, and drop-offs at predictable points in the user journey.

To increase user engagement, you first need to measure user engagement accurately. Most teams track what's easy, not what's meaningful. Here are the engagement metrics that count:

Activation rate is the most critical user engagement metric.

A strong activation event reflects a real "aha moment," not just account creation.

Examples: completing a core workflow, creating something useful, inviting a teammate, or seeing a meaningful result.

If activation is low, engagement plays earlier in the journey aren’t doing enough to guide users to the right actions.

If activation is high but retention is low, users may be reaching value once, but not seeing why they should return.

Activation rate is usually pulled from signup-to-activation funnels that show how many new users reach your defined “first value” moment.

Activation Rate Equation:

Activation Rate = (Number of users who reach activation ÷ Total new users) × 100

Average activation rate: 32%
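The following is a minimal Python sketch of the activation rate for one signup cohort. It assumes you can export each new user's signup date and the date of their first activation event. The 7-day window is an illustrative assumption, so set it to whatever "first value" window fits your product.

```python
from datetime import date, timedelta


def activation_rate(signups, activations, window_days=7):
    """signups: {user_id: signup_date}
    activations: {user_id: date of the user's first activation event}
    A new user counts as activated only if they reached the activation
    event within window_days of signing up (an assumed window)."""
    if not signups:
        return 0.0
    window = timedelta(days=window_days)
    activated = sum(
        1
        for user_id, signed_up in signups.items()
        if user_id in activations and activations[user_id] - signed_up <= window
    )
    return activated / len(signups) * 100


# Hypothetical cohort data
signups = {
    "u1": date(2024, 5, 1),
    "u2": date(2024, 5, 1),
    "u3": date(2024, 5, 2),
    "u4": date(2024, 5, 3),
}
activations = {
    "u1": date(2024, 5, 2),   # activated after 1 day
    "u3": date(2024, 5, 20),  # activated, but outside the 7-day window
}

print(activation_rate(signups, activations))  # 25.0
```

Widening the window changes the answer (here, 30 days would yield 50.0). That is why it helps to fix the window in your definition before comparing cohorts.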

DAU/MAU ratio: what it tells you

The DAU/MAU ratio is a useful measure of habitual usage and overall product stickiness.

Monthly active users and daily active users together reveal patterns that single-session metrics can't.

A healthy DAU/MAU ratio for B2B SaaS products is typically 15–25%.

DAU/MAU ratio is typically calculated in your product analytics platform by comparing unique daily active users against unique monthly active users over the same rolling period.

A rising DAU/MAU ratio doesn't automatically signal healthy engagement. A ratio that looks strong in one product category may indicate underperformance in another, so benchmark against your own historical trend rather than industry averages alone.

DAU/MAU Ratio Equation:

DAU/MAU Ratio = (Average daily active users ÷ Monthly active users) × 100
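Analytics platforms usually compute this for you. The sketch below shows the arithmetic under one common convention, which is an assumption here: average DAU across a 30-day window divided by the number of unique users active at any point in that same window. The `(user_id, active_date)` input shape is hypothetical.

```python
from datetime import date, timedelta


def dau_mau_ratio(activity, end, days=30):
    """activity: iterable of (user_id, active_date) pairs.
    Returns average daily active users over the `days`-long window ending
    on `end`, divided by the unique users active in that window, as a %."""
    start = end - timedelta(days=days - 1)
    daily = {}  # date -> set of user_ids active that day
    for user_id, day in activity:
        if start <= day <= end:
            daily.setdefault(day, set()).add(user_id)
    monthly = set().union(*daily.values())
    if not monthly:
        return 0.0
    # Days with no activity contribute zero, so divide by the full window.
    avg_dau = sum(len(users) for users in daily.values()) / days
    return avg_dau / len(monthly) * 100


# Hypothetical data: one daily user plus three users who each show up once.
window_end = date(2024, 5, 30)
activity = [("A", date(2024, 5, 1) + timedelta(days=i)) for i in range(30)]
activity += [("B", date(2024, 5, 3)), ("C", date(2024, 5, 15)), ("D", date(2024, 5, 29))]

print(round(dau_mau_ratio(activity, window_end), 1))  # 27.5
```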

Return usage rate: what it tells you

Whether value is repeatable.

This measure of user engagement predicts retention more reliably than login frequency alone.

Return usage asks:

• Do users come back on their own?

• Do they repeat the action that mattered?

If users activate but don’t return, focus on Repeat Value plays before adding re-engagement campaigns.

Messaging can encourage a return. Only the product can make it stick.

Return usage is most often reviewed in retention or cohort views that show whether users repeat a core action after their initial success.

Return Usage Rate Equation:

Return Usage Rate = (Users who repeat a core action within 7 days ÷ Users who activated) × 100

You can adjust the time window based on your product (daily, weekly, monthly).
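The sketch below computes the rate with the window as a parameter, so the same code covers daily, weekly, or monthly products. It is a minimal Python illustration under assumed inputs: a per-user activation timestamp and a list of core-action timestamps. It treats a "repeat" as any core action strictly after activation, which is one reasonable interpretation rather than a standard.

```python
from datetime import datetime, timedelta


def return_usage_rate(activated_at, core_actions, window=timedelta(days=7)):
    """activated_at: {user_id: datetime of the user's activation}
    core_actions: iterable of (user_id, datetime) for each core action.
    A user counts as returning if they repeat the core action after
    activating and no later than `window` after activation."""
    if not activated_at:
        return 0.0
    returned = set()
    for user_id, ts in core_actions:
        first = activated_at.get(user_id)
        if first is not None and first < ts <= first + window:
            returned.add(user_id)
    return len(returned) / len(activated_at) * 100


# Hypothetical data
activated_at = {
    "u1": datetime(2024, 5, 1, 9, 0),
    "u2": datetime(2024, 5, 1, 9, 0),
    "u3": datetime(2024, 5, 2, 14, 0),
}
core_actions = [
    ("u1", datetime(2024, 5, 1, 9, 0)),   # the activating action itself
    ("u1", datetime(2024, 5, 3, 10, 0)),  # repeat within 7 days
    ("u2", datetime(2024, 5, 12, 8, 0)),  # repeat, but roughly 11 days later
    ("u3", datetime(2024, 5, 2, 14, 0)),  # only the activating action
]

print(round(return_usage_rate(activated_at, core_actions), 1))                      # 33.3
print(round(return_usage_rate(activated_at, core_actions, timedelta(days=30)), 1))  # 66.7
```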

Time to value: what it tells you

How long it takes users to experience their first win.

Effective onboarding should aim to help users reach their first meaningful outcome, their "aha moment," within 24 hours in a product-led growth motion.

Look for friction you can remove, delay, or simplify.

Shortening time to value often has more impact than adding new engagement tactics. If users get value faster, many engagement problems resolve themselves.

Time to value is calculated by comparing signup timestamps with activation events, often surfaced in cohort or lifecycle timing analyses.

Time to Value Equation:

Time to Value = Timestamp of activation - Timestamp of signup

Average time to value: 38 days
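A per-user version of the equation is sketched below in Python, with hypothetical input dictionaries. It reports both the mean and the median. The median is an added suggestion, not part of the benchmark above: a handful of very slow activations can pull the mean well above what a typical user experiences.

```python
from datetime import datetime
from statistics import mean, median


def time_to_value_days(signed_up_at, activated_at):
    """Return {user_id: days from signup to activation} for activated users.
    Users who never activated are left out here (an assumption); report
    them separately so a slow cohort doesn't look artificially fast."""
    return {
        user_id: (activated_at[user_id] - signup).total_seconds() / 86400
        for user_id, signup in signed_up_at.items()
        if user_id in activated_at
    }


# Hypothetical data
signed_up_at = {
    "u1": datetime(2024, 5, 1, 9, 0),
    "u2": datetime(2024, 5, 1, 9, 0),
    "u3": datetime(2024, 5, 2, 9, 0),
    "u4": datetime(2024, 5, 3, 9, 0),   # has not activated yet
}
activated_at = {
    "u1": datetime(2024, 5, 1, 21, 0),  # 12 hours later
    "u2": datetime(2024, 5, 4, 9, 0),   # 3 days later
    "u3": datetime(2024, 5, 12, 9, 0),  # 10 days later
}

ttv = time_to_value_days(signed_up_at, activated_at)
days = list(ttv.values())
print(ttv)           # {'u1': 0.5, 'u2': 3.0, 'u3': 10.0}
print(mean(days))    # 4.5
print(median(days))  # 3.0
```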


Most SaaS products lose more than 75% of new users within the first week. Here are six proven ways to increase user engagement and fuel growth:

Use this play when

Users are signing up but not reaching activation, or dropping off before they experience your product's core value.

User onboarding has a powerful ripple effect on the entire customer journey and customer experience. Since user motivation is typically highest during onboarding, it's the best opportunity to get users to accomplish meaningful actions and reach their first meaningful outcome. Walk each new user through key features of your product with the goal of helping them find your product's core value proposition as quickly as possible.

Duolingo does this brilliantly. Their onboarding flow guides new users through a quick translation exercise, demonstrating value before asking for a signup. By the time users see a registration screen, they've already made progress toward their goal. Because humans have an inherent completion bias, they want to finish what they started.

Personalized onboarding experiences tailored to individual user needs can lead to 25–40% higher activation rates compared to generic flows.

If users activate but don’t come back on their own, your repeat value isn’t clear yet. Messaging may drive spikes, but the most reliable indicator is a base of sustained active users.

After a user succeeds once, the product often goes quiet. The experience feels “done,” so teams try to restart momentum later with emails or reminders.

That works sometimes. But it doesn’t last.

Look closely at what happens right after a user succeeds. If the product doesn’t clearly point to what comes next, users won’t find a reason to return.

If you can’t answer “what’s the next useful thing?” inside the product, engagement will stay unpredictable.

You’ll know it’s working when

Users reach their first meaningful outcome faster and return to the product without being prompted.

Use this play when

Users are completing individual steps but not advancing through the product or taking the next logical action.

The microcopy throughout your product, including CTAs, form descriptions, and modal dialogs, can encourage users to complete actions, explain technical details in plain language, and keep users engaged over time.

Effective copy can also guide trial users toward upgrading.

Mailchimp is a prime example: personalized welcome text, short feature descriptions, and clear CTAs that grab users' attention on every login.

This play exists to reduce doubt. It keeps users engaged and also answers the question: am I on the right track?

Progress should help users feel closer to what they’re trying to achieve; make it clear through your writing.

If finishing a step doesn’t make someone feel closer to their goal, it isn’t pulling its weight.

You’ll know it’s working when

Click-through rates on key CTAs improve and trial-to-paid conversion increases.

Use this play when

Active users are engaged with the basics but feature adoption has plateaued and users aren't discovering higher-value capabilities on their own.

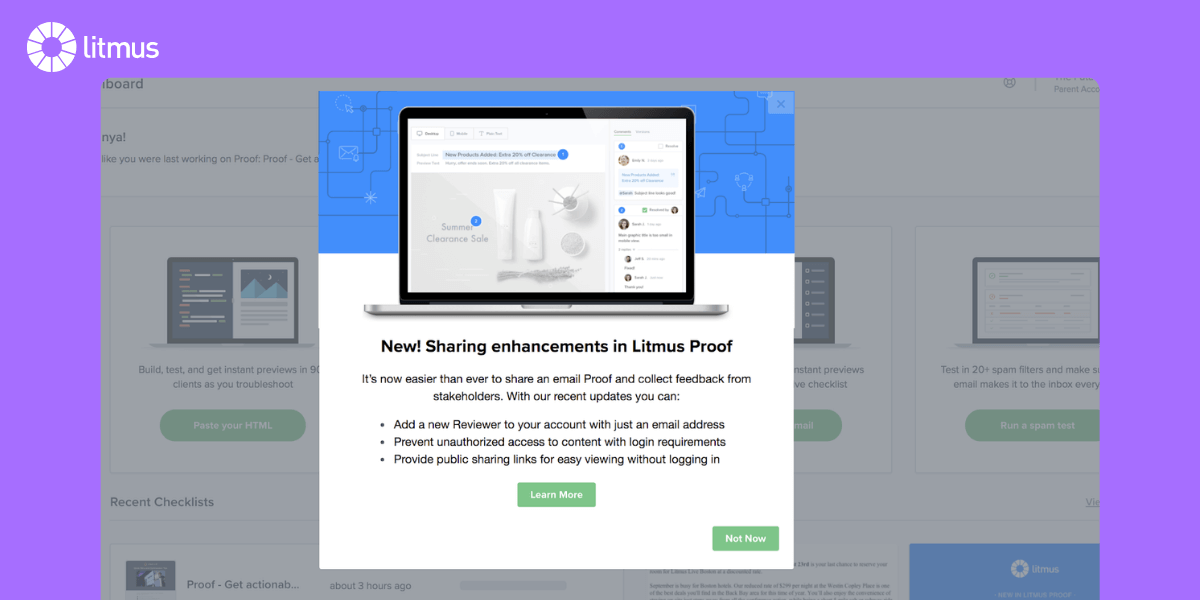

New features don't drive feature adoption if users don't know they exist. In-app guidance is among the most effective user engagement strategies for helping active users discover and adopt your product's features. Using interactive product walkthroughs instead of static help documentation can significantly improve user engagement, with completion rates of 40–60% compared to just 10–20% for static content.

When Litmus used Appcues to create a simple tooltip for their Process HTML feature, 62% of users who saw it became active users of that feature, compared to only 2% in the control group, a 22X increase in feature adoption. Treat every feature launch like a miniature product launch.

Good guidance is quiet and specific.

It shows up when someone hesitates, helps them take action, and then gets out of the way.

If engaged users are ignoring guidance, there’s probably too much of it or it’s showing up at the wrong time.

You’ll know it’s working when

Feature adoption rates climb after in-app guidance goes live and users discover capabilities without filing support tickets.

Use this play when

Users go quiet between sessions and need a timely reason to return that's relevant to what they were doing in the product.

What users feel after the play:

“That would’ve helped me earlier.”

User engagement happens outside your product too. Setting certain events to trigger a timely email reinforces in-app guidance through another channel, making it more likely that users will take action.

Behavior-triggered emails like these help guide users through your product's features and ensure that every action is followed by a logical next step.
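Here is a minimal sketch of "setting certain events to trigger a timely email." The Python below decides who is due for a follow-up: users who performed a trigger action but haven't taken the logical next step after a grace period. Every event name, the template name, and the 24-hour wait are hypothetical placeholders. The code only selects recipients; actually sending the email would happen in whatever messaging tool you use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class TriggerRule:
    # Hypothetical rule shape; not the schema of any specific tool.
    trigger_event: str        # in-app action that starts the rule
    expected_next_event: str  # the logical next step we hope follows
    wait: timedelta           # grace period before nudging by email
    email_template: str       # follow-up email to send


def due_emails(rule, events, now):
    """events: iterable of (user_id, event_name, timestamp).
    Return user_ids (sorted) who did the trigger event at least `rule.wait`
    ago and have not yet done the expected next event."""
    triggered = {}  # user_id -> earliest trigger timestamp
    completed_next = set()
    for user_id, name, ts in events:
        if name == rule.trigger_event:
            triggered[user_id] = min(ts, triggered.get(user_id, ts))
        elif name == rule.expected_next_event:
            completed_next.add(user_id)
    return sorted(
        user_id
        for user_id, ts in triggered.items()
        if now - ts >= rule.wait and user_id not in completed_next
    )


rule = TriggerRule(
    trigger_event="project_created",
    expected_next_event="teammate_invited",
    wait=timedelta(hours=24),
    email_template="invite_teammate_nudge",
)
events = [
    ("u1", "project_created", datetime(2024, 5, 1, 9, 0)),
    ("u1", "teammate_invited", datetime(2024, 5, 1, 10, 0)),  # already moved on
    ("u2", "project_created", datetime(2024, 5, 1, 9, 0)),    # stalled for 27 hours
    ("u3", "project_created", datetime(2024, 5, 2, 9, 0)),    # only 3 hours ago
]
now = datetime(2024, 5, 2, 12, 0)

print(due_emails(rule, events, now))  # ['u2']
```

The rule only fires when the next step is still missing. This keeps the email from landing on users who have already moved on, so it stays timely rather than noisy.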

You’ll know it’s working when

Return usage improves and users advance through the product between sessions without manual outreach.

Use this play when

You can see where users drop off in your analytics but can't explain why, and support tickets keep pointing to the same friction points.

Quantitative analytics tools tell you what isn't working. Qualitative user feedback tells you why. Collecting feedback from live chat tools, user surveys, NPS scores, and session recordings provides valuable insights about how to better engage active users within your product.

Actively soliciting user feedback and demonstrating that changes have been made based on that feedback can also strengthen customer relationships and increase retention.

You’ll know it’s working when

The friction points you uncover get resolved before they show up in churn data, and user satisfaction scores improve over time.

Use this play when

Users seem overwhelmed by the product's scope, struggle to find their way to core value, or your own team has lost clarity on what your product is really for.

Rolling out too many features that don't add value creates product debt and leads users to believe you've lost focus on the core value that made them sign up. If analytics show that customers aren't using a feature, either invest to improve it or cut it.

When Moz diversified outside its core SEO focus, it led to significant revenue losses and layoffs. After refocusing on that core in 2017, Moz returned to profitability and is once again one of the leading SEO tools in the industry. Fewer options help users focus faster on your product's core value.

You’ll know it’s working when

Users navigate to core value faster, time to value decreases, and the product feels more focused to new customers.

Personalization shouldn't stop at onboarding.

At every lifecycle stage, users interact with your product through the lens of their own goals. Segmenting customers based on behavior, demographics, or other criteria allows for more effective engagement strategies that speak directly to each group's needs.

The best apps use personalization to create an intuitive user experience at every step.

Customer feedback surveys like CES, CSAT, and NPS are great temperature checks, but moving them in-app makes them dramatically more useful. In-app surveys let you target the right user at the right moment, collect feedback with more contextual accuracy, and improve user satisfaction by showing users that their experience is actively shaped by their responses.

PatientSky used Appcues to gather customer feedback in-app, dramatically improving the quality and actionability of what they learned. Collect feedback after key workflows, then use that user feedback to inform what you build next.

Engagement in the early days plants seeds for long-term retention. Acknowledge your customers' milestones both in-app and through email. Spotify's famous Wrapped campaign recaps a user's listening habits from the past year, serving simultaneously as an engagement tool and a reminder of the product's value. These moments build loyal customers who feel seen and invested in the relationship.

Your most engaged users want to align with your brand. Involve them in feature launches, ask for beta feedback, and showcase their success stories.

Consistent, valuable content and active, two-way conversations foster online community engagement. User-generated content like reviews and photos builds community and trust, while showcasing member spotlights and user-created posts increases loyalty.

On social media, polls, quizzes, and open-ended questions encourage participation and keep users engaged with your brand even outside the product.

Don't limit your ability to help customers to what they can do inside the product.

Build a library of interesting content, including blogs, ebooks, tutorials, use cases, and industry reports, that contextualizes your product and helps customers grapple with high-level problems.

Relevant content builds another layer of trust, and valuable content that doesn't read as a product ad positions you as a partner rather than a vendor. Become a resource, and customers will return to you beyond their transactional needs.

Most teams still operate in silos: in-app messages managed by one team, emails owned by another, user data locked away from anyone who needs it.

Meanwhile, users experience all of it as one journey. Here's how we see ownership playing out:

| Play | Primary lead | Key partners | Review trigger | Watch for |
|---|---|---|---|---|
| Repeat Value | Product | CS, Customer Marketing | Workflow changes, retention dips | Return usage depends on reminders |
| Progress & Momentum | Product | CS, UX | Activation or drop-off reviews | Steps completed without confidence |
| Contextual Guidance | Product | Support, CS | Repeated friction or support themes | Help becoming background noise |
| Feature Discovery | Product, Product Marketing | Customer Marketing | Feature releases, adoption stalls | Discovery driven by launch timing |
| Re-engagement | Customer Marketing | Product, CS | Inactivity spikes, trial drop-off | Messaging compensating for experience gaps |

Clear ownership helps prevent gaps, but engagement work rarely succeeds when it’s treated as a series of handoffs.

Most engagement problems sit at the intersection of Product, Customer Success, and Customer Marketing. Product sees behavior, CS hears friction, and Marketing reinforces direction, but improvement stalls when each team works from a different diagnosis.

Strong collaboration starts by aligning on what’s breaking in the user experience, not on which team should act.

Before choosing a play or launching changes, teams should agree on:

When those answers are shared, ownership becomes an accelerator instead of a boundary.

A simple way to collaborate without adding a heavy process is to anchor discussions around behavior, not solutions.

When reviewing engagement, ask together:

These questions help teams converge on the same problem, even if they contribute in different ways.

When teams surface several engagement problems at once, prioritization often turns into a debate about urgency or ownership.

Instead of ranking ideas, prioritize problems using these questions:

The goal isn’t to solve everything. It’s to choose the issue that, once addressed, makes the rest easier to reason about.

Collaboration tends to break down when:

These are usually signals that prioritization happened too late or not at all.

Knowing which plays to run is only half the challenge. Most teams get stuck in planning paralysis, waiting for perfect data, a perfect plan, or a perfect moment. Here's how to get moving.

Most teams craft strong individual messages but miss how those pieces work together. Users experience in-app messages, emails, and push notifications as one journey, not three separate campaigns. Connected experiences, where each touchpoint is triggered by user behavior and feeds into the next, drive 1.7x higher customer retention than siloed messaging.

The most effective teams start with the user moment: ask what a user needs right after they complete a key action, and use that answer to determine which channel and message make sense. A well-designed connected flow has four components:

Three proven starting flows for teams new to scaled user engagement:

These aren't abstract engagement problems. Each is a specific breakdown in momentum. Good user engagement strategies should be built around fixing these moments, not around adding more activity everywhere.

Onboarding completion flow: Guide new users through initial setup with an in-app welcome tour, email reminders for incomplete steps, and celebration messages at key milestones. The clear metric (completion rate) and defined audience (new users) make this the ideal first project.

Feature adoption campaign: Choose an underutilized but high-value feature and build a connected flow with an email announcement, in-app guidance when users are in the right context, and follow-up with success stories from users who've benefited. Feature adoption campaigns often produce quick wins within a few weeks.

Re-engagement sequence: Target users who haven't logged in recently with a coordinated campaign: a personalized email highlighting what they're missing, a follow-up with a specific action to take, and a smooth in-app experience when they return.

As a rule, anything that needs to happen for most users should live in the product itself. That includes showing what to do next, helping users recover when they get stuck, and reinforcing progress toward meaningful outcomes.

Out-of-app messaging works best when it invites users back or highlights changes. But it has its downsides: it struggles when asked to explain value or guide core behavior on its own. Using out-of-app messaging for its own sake, without basing it on what people are or aren’t doing in your product, creates a gap between the messages users receive and the experience they actually have.

If engagement depends heavily on external messaging, that's usually a signal that the product experience needs attention and should be carrying more weight in-app.

GetResponse tracked which actions led users to send their first email, identified the highest-performing path, and built a new onboarding flow to guide more users toward it.

The result: a 60% increase in new email creation and a 16% increase in email sends, their primary activation moment.

Every team that takes user engagement seriously eventually runs into the same question:

Should we build this ourselves, or should we buy a tool?

This section is here to help you make the decision intentionally, based on what kind of engagement work you need to do right now.

Building user engagement into your product can be the right choice, especially early on.

Teams tend to build when:

In these cases, building can be faster in the short term. Everything lives in one codebase. There’s no extra system to learn or maintain, and decisions stay close to the product.

Building works best when control is deeply important. You can build exactly what you want, where you want it, without compromise.

Where it tends to struggle is iteration. As user engagement needs evolve, small changes often require planning, development, review, and deployment. What starts as a simple adjustment can take weeks. Over time, engagement work slows down or gets deprioritized entirely, which hurts both user satisfaction and ongoing work.

Buying usually becomes attractive when engagement stops being a setup problem and starts being a growth problem.

Teams tend to buy when:

At this stage, the challenge isn’t knowing what to build. It’s being able to change it fast enough.

The main advantage of buying is speed. Changes can be made quickly. Experiments are easier to run. Engagement becomes a process teams can actively improve instead of something they ship once and leave alone.

The tradeoff is ownership. Tools come with constraints. Teams need discipline to avoid overusing them. Without a clear strategy, it’s easy to add more user engagement without improving outcomes.

Instead of only asking “Should we build or buy?” try starting with these questions:

If most of those are true, buying usually makes sense.

If engagement needs are simple, stable, and tightly coupled to core logic, building can be the right call.

Neither choice is permanent. Many teams start by building, then buy later when engagement becomes more strategic. Others buy early, then build custom pieces as needed.

What matters is choosing based on your constraints, not on ideology.

| Consideration | Build in-house | Buy a platform |
|---|---|---|
| Control over UI logic | ✅ Full control | ⚠️ Constrained by platform |
| Deep workflow integration | ✅ Native by default | ⚠️ Depends on tooling |
| Speed to first version | ⚠️ Slower | ✅ Faster |
| Iteration speed | ❌ Dev-dependent | ✅ Low code |
| Experimentation | ❌ Costly | ✅ Designed for it |
| Personalization at scale | ⚠️ Complex | ✅ Built-in |
| Measurement & analytics | ⚠️ Custom work | ✅ Included |
| Long-term maintenance | ❌ High | ⚠️ Vendor dependency |
| Early-stage flexibility | ✅ Strong | ⚠️ Can be overkill |
| Mature product scalability | ⚠️ Hard | ✅ Designed for scale |

SignalPET helps veterinary teams monitor pets between visits using ongoing health data. Early engagement looked strong, since clinics and pet owners could complete their initial setup and see value fast. The challenge, though, came from sustaining user engagement over time. Users understood the concept. What was less clear was how their early actions translated into ongoing, repeat value.

After the first successful interaction, the experience didn’t do enough to reinforce why returning mattered. Users got value once, but the product wasn’t consistently showing how that value accumulated over time. This was a continuity problem.

The team focused on reinforcing repeat value rather than pushing re-engagement.

They:

Instead of asking users to come back, the product showed them why coming back made sense.

Engagement improved because users could see continuity. Each interaction felt connected to the last, and future value felt easier to anticipate.

Users returned because the product made progress visible over time, not from reminders.

Repeat Value plays work when they help users understand that success compounds. If users get value once but don’t come back, the experience likely isn’t doing enough to show how today’s action connects to tomorrow’s outcome.

Users were active and comfortable with the basics, but engagement plateaued. Advanced features were rarely adopted, despite the time and effort the product team had invested in them.

Feature discovery followed release timing, not user readiness. In practice, features were visible but easy to ignore. Users didn’t yet understand why they mattered, only that there was suddenly a new thing to learn.

This issue came down to timing.

The team narrowed their focus to discovery to improve user engagement and feature adoption.

They:


Users encountered features only when they had the context to care. That meant discovery felt helpful, not distracting, and active users had a deeper, more meaningful connection to keep them learning.

Feature Discovery works when it follows progress. Showing features too early creates noise, while showing them when they’re relevant creates meaningful user engagement.

Xometry connects buyers with manufacturers, and the core action that matters is placing an order. While users were signing up and exploring, many stalled before completing that step. They had intent, but the experience wasn’t consistently helping them move from consideration to action.

Users were engaged, but stalling at that step revealed an in-product follow-through problem.

Users moved through the product fine until they hit friction and uncertainty at decision points inside the product, specifically at the moment of becoming paying customers.

The team had already made key information and reassurance part of the experience, but that content lived outside the moment when users needed it most. As a result, users hesitated, deferred action, or dropped off entirely.

Users already wanted to place orders. But the product wasn’t actively guiding them through the moments where they needed confidence to act.

Xometry focused on contextual guidance at high-intent moments.

They:

The messaging was tightly scoped. It showed up only when users reached a decision point and disappeared once the action was taken.

These were engaged users, and they didn't need more reminders or external prompts. What was missing was support in the moment of action. By placing guidance inside the workflow, Xometry helped users move forward without breaking focus or sending them elsewhere for answers.

User engagement improved because the product reduced hesitation at the exact point where users were already leaning in.

Contextual Guidance works when it removes friction where intent already exists. If users want to act but don’t, the most effective engagement often happens inside the workflow, at the moment of decision.

User engagement matters, and if you’ve made it this far, congratulations: you have a full understanding of the landscape you're working in.

But now it's the moment to move from ideas to confidence.

Here are the next steps teams usually take, depending on what they need most right now.

Remove adoption bottlenecks before they turn into engagement problems

Learn how teams scale digital adoption without adding friction or manual work.


See how real teams put these engagement plays into practice

Explore how product-led teams use Appcues to improve activation, adoption, and retention.


You can increase user engagement through proven strategies: provide early "aha" moments, optimize UX writing, expose users to new features through in-app guidance, automatically trigger emails based on in-app behavior, collect qualitative user feedback to find improvement opportunities, and cut under-used features to keep focus on core value. Effective user engagement strategies also include personalization, gamification, and in-app surveys to improve customer satisfaction over time.

Good user engagement means users are repeatedly getting value from your product. The definition of "active" differs between products. A social media automation tool might expect daily logins, while a budgeting tool might count monthly logins as healthy engagement. A healthy DAU/MAU ratio for B2B SaaS is typically 15–25%, and an activation rate of 25–40% within the first week is considered a solid benchmark. Good user engagement is ultimately defined by whether users are making progress toward meaningful outcomes.

Feature adoption rate, return usage, and time to value are among the most reliable user engagement measures for predicting retention. Early adoption of a core feature predicts retention far more reliably than login frequency alone. Use an analytics tool to track these behaviors and connect them to long-term retention outcomes, and collect user feedback regularly to understand the qualitative picture behind the numbers.