How to quantify customer happiness: 9 examples of effective CES, CSAT, and NPS surveys

Are your customers happy?

This seemingly basic question can feel scary and complex. Trying to intuit your customers’ feelings about your product—and why they feel that way—can easily send you down a philosophical rabbit hole.

In reality, getting to the answer is simple. If you don’t know how happy your customers are, just ask!

Surveys like NPS, CES, and CSAT offer concrete, measurable ways to track satisfaction over time, helping you gather feedback and insights to influence both the product and customer experience.

To take full advantage of NPS, CES, and CSAT surveys, you have to think carefully about what to ask and when to ask it. While it’s important to measure customer satisfaction throughout the lifecycle, it’s especially crucial in the later stages, among what we call your regulars and champions. Soliciting feedback from these power users can shed light on improvements you can make for everyone. And regulars and champions want to give this feedback. These late-stage users are already invested in your product and want to feel like their opinions are being used to shape the roadmap.

But a poorly timed or over-the-top survey can erode customer trust and take away from the customer experience. To set you up for success, let’s dig deeper into these 3 survey types and look at 9 examples done right, 3 for each type.

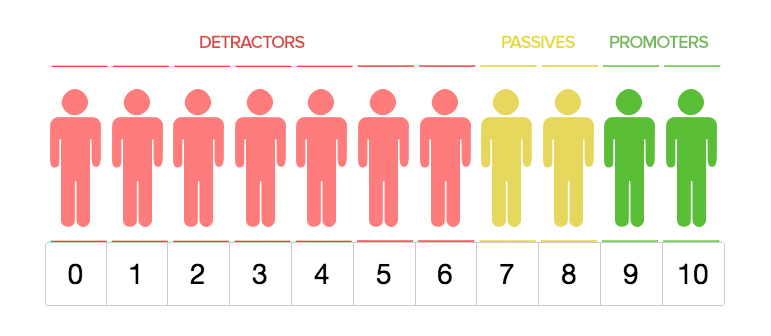

NPS is a single-question survey that measures customer satisfaction and collects feedback. Every user is asked the same question: On a scale of 0 to 10, how likely are you to recommend our product/service/brand?

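Based on their NPS responses, customers are split into 3 groups: promoters (scores of 9 or 10), passives (7 or 8), and detractors (0 to 6). Your Net Promoter Score is the percentage of promoters minus the percentage of detractors, so it can range from -100 to 100.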
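If you want to calculate the score yourself, the arithmetic is simple: subtract the percentage of detractors (0 to 6) from the percentage of promoters (9 or 10). Here’s a minimal Python sketch, assuming your responses are stored as a plain list of integer scores from 0 to 10. The function names are our own, not part of any survey tool.

```python
from collections import Counter


def nps_segment(score: int) -> str:
    """Classify a single 0-10 response into the standard NPS groups."""
    if not 0 <= score <= 10:
        raise ValueError(f"NPS scores must be between 0 and 10, got {score}")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def net_promoter_score(scores: list[int]) -> float:
    """Return % promoters minus % detractors, a value from -100 to 100."""
    if not scores:
        raise ValueError("need at least one response")
    counts = Counter(nps_segment(s) for s in scores)
    # Passives count toward the total but not toward either side of the subtraction.
    return 100 * (counts["promoter"] - counts["detractor"]) / len(scores)


responses = [10, 9, 9, 8, 7, 6, 3, 10]
print(net_promoter_score(responses))  # 25.0
```

With 4 promoters, 2 passives, and 2 detractors out of 8 responses, the sample prints 25.0.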

It’s important to be thoughtful about when and how you use NPS. While you do want to send an NPS survey at multiple stages of the lifecycle, avoid showing it too early in the user experience. Customers need a chance to use your product and see its value before knowing if they would recommend it to a friend.

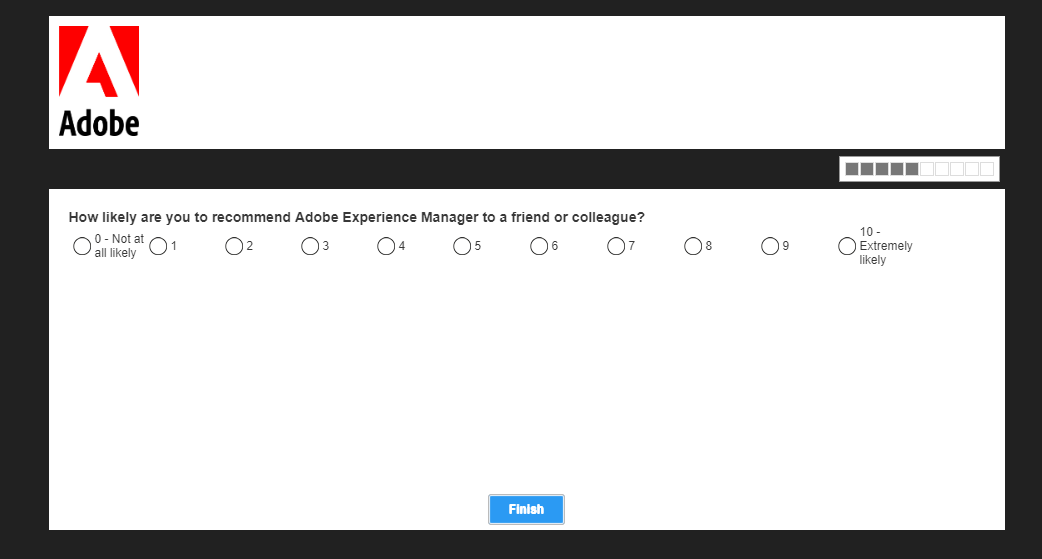

Adobe uses NPS surveys at key points in the user lifecycle to measure satisfaction, particularly focusing on regular and champion users.

The survey itself follows the classic structure and presentation, but it is asked at just the right time. In this case, the in-app window appeared for the first time only after the user had been using Adobe Experience Manager consistently for more than 3 months. This ensures that Adobe targets users who have spent significant time in the tool and are therefore qualified to answer the question.
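If you run your own surveys, a timing rule like Adobe’s boils down to an eligibility check. Here’s a hypothetical Python sketch, not Adobe’s actual logic: the `UserActivity` fields, the 90-day cutoff standing in for “more than 3 months,” and the 75% weekly-activity bar standing in for “consistently” are all assumptions you would tune to your own product.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MIN_TENURE = timedelta(days=90)  # assumption: "more than 3 months" as 90 days
MIN_ACTIVE_SHARE = 0.75          # assumption: "consistently" = active in 75% of weeks


@dataclass
class UserActivity:
    # Hypothetical fields; in practice these would come from product analytics.
    first_active: date
    active_weeks: int  # distinct weeks with at least one session
    has_seen_nps: bool


def eligible_for_first_nps(user: UserActivity, today: date) -> bool:
    """Return True if the user should see the NPS survey for the first time."""
    if user.has_seen_nps:
        return False
    tenure = today - user.first_active
    if tenure <= MIN_TENURE:
        return False  # too early to judge whether they'd recommend the product
    weeks_since_start = tenure.days // 7  # at least 13 here, since tenure > 90 days
    return user.active_weeks / weeks_since_start >= MIN_ACTIVE_SHARE


regular = UserActivity(first_active=date(2024, 1, 1), active_weeks=15, has_seen_nps=False)
print(eligible_for_first_nps(regular, today=date(2024, 5, 1)))
```

Checking both tenure and activity keeps the survey away from accounts that are old but rarely used, which are no better placed to answer than brand-new ones.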

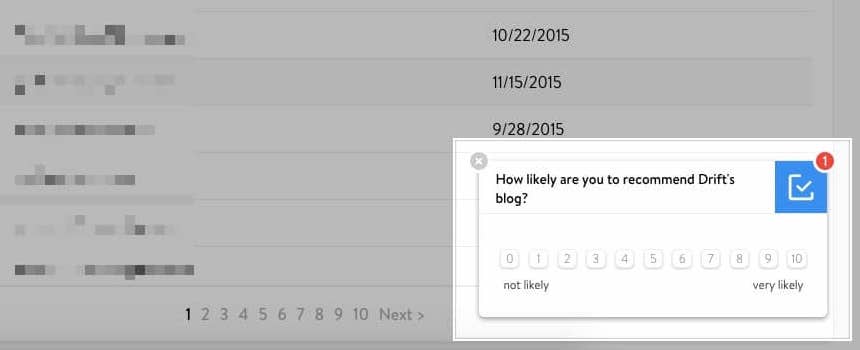

NPS surveys are traditionally focused on the product as a whole, but they can also be used to measure happiness with things like marketing or customer service. Drift, for example, uses NPS to gauge satisfaction with their blog.

Drift presents the NPS survey in a subtle, unobtrusive way. The modal appears in the bottom corner of the screen, allowing users to finish what they were doing before addressing the message. With non-urgent communication like this, choose a similarly non-disruptive UI pattern that doesn’t overpower the user experience.

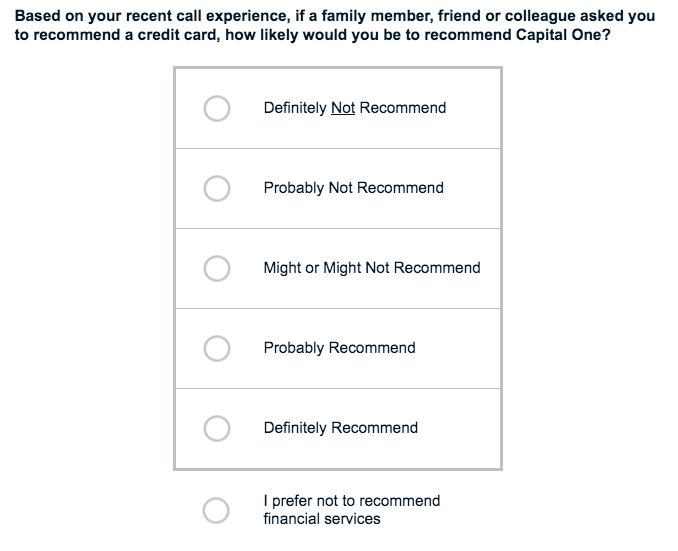

Capital One takes a unique approach to their NPS survey. First of all, they don’t ask users to select an answer on the classic 0 to 10 scale. Instead, they ask users to choose from options that range from “definitely not recommend” to “definitely recommend.”

Secondly, they add an answer that you don’t usually see on NPS surveys: “I prefer not to recommend financial services.” This is a smart move, both for survey accuracy and maintaining customer trust. Some users may not feel comfortable recommending any financial service—after all, choosing a credit card can be a personal and sensitive decision. Rather than having these users skew their survey data by selecting “Definitely not recommend,” Capital One offers an alternative.
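If your own survey includes an opt-out answer like this, one way to keep it from skewing results is to report it separately instead of folding it into the lowest score. The Python sketch below is illustrative only: the numeric scores and the three middle labels are made up, and only the two end labels and the opt-out wording come from Capital One’s survey.

```python
# Hypothetical label-to-score mapping. Only the two end labels and the
# opt-out answer come from Capital One's survey; the middle labels are made up.
LABEL_SCORES = {
    "Definitely not recommend": 1,
    "Probably not recommend": 2,
    "Not sure": 3,
    "Probably recommend": 4,
    "Definitely recommend": 5,
}
OPT_OUT = "I prefer not to recommend financial services"


def summarize(responses: list[str]) -> dict:
    """Average the scored answers and count opt-outs separately.

    Opt-outs are left out of the average instead of being treated as the
    lowest score. Unknown labels raise KeyError.
    """
    scored = [LABEL_SCORES[r] for r in responses if r != OPT_OUT]
    opt_outs = len(responses) - len(scored)
    average = sum(scored) / len(scored) if scored else None
    return {"average": average, "scored": len(scored), "opt_outs": opt_outs}


print(summarize(["Definitely recommend", "Probably recommend", OPT_OUT, OPT_OUT]))
```

Here the two opt-outs are reported on their own and the average is 4.5. Forcing them into “Definitely not recommend” would have dragged the average down to 2.75, which is exactly the kind of skew the extra answer avoids.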

CES surveys ask customers to gauge the ease of their experience. On a scale of “very easy” to “very difficult,” users are asked how easy it was to interact with your company or to complete a specific task in your product.

This metric is simple to track over time and gives you a strong sense of customer loyalty. After all, customers are more likely to keep using your product if it’s easy to do so. A challenging or frustrating experience, whether sustained over time or a one-off, can prompt many customers to start looking for another solution.
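CES can be summarized in more than one way; two common choices are the average rating and the share of respondents who picked an “easy” answer. Here’s a minimal Python sketch that reports both per month, assuming a 5-point scale coded 1 (very difficult) to 5 (very easy) and treating 4 or 5 as “easy.” Both the coding and the threshold are assumptions; match them to your own survey.

```python
from collections import defaultdict
from statistics import mean

# Assumed coding: 1 = very difficult ... 5 = very easy.
EASY_THRESHOLD = 4  # ratings of 4 or 5 count as "easy"


def ces_summary(ratings: list[int]) -> tuple[float, float]:
    """Return (average rating, percent of 'easy' ratings) for one period."""
    if not ratings:
        raise ValueError("need at least one rating")
    pct_easy = 100 * sum(r >= EASY_THRESHOLD for r in ratings) / len(ratings)
    return mean(ratings), pct_easy


def ces_by_period(responses: list[tuple[str, int]]) -> dict[str, tuple[float, float]]:
    """Group (period, rating) pairs, e.g. ("2024-05", 4), and summarize each period."""
    grouped: dict[str, list[int]] = defaultdict(list)
    for period, rating in responses:
        grouped[period].append(rating)
    return {period: ces_summary(r) for period, r in sorted(grouped.items())}


data = [("2024-05", 5), ("2024-05", 4), ("2024-05", 2),
        ("2024-06", 5), ("2024-06", 5), ("2024-06", 3), ("2024-06", 4)]
print(ces_by_period(data))
```

Watching the trend from one period to the next is usually more informative than any single month’s number.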

But CES surveys don’t capture the full picture. For example, a regular user could be very satisfied with your product overall but have one bad interaction. Depending on when you asked the question, their response could vary wildly. For a more accurate read on customer sentiment, pair CES with NPS or longer-form surveys so you can understand customer satisfaction and loyalty together.

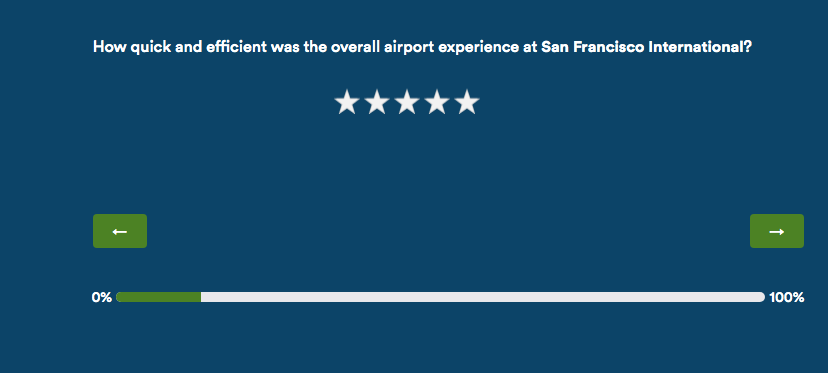

Alaska Airlines regularly surveys recent passengers, asking them a variety of questions about their flight. One question is dedicated to CES and asks customers to rate how quick and efficient their airport experience was, on a scale of 1 to 5.

Alaska Airlines could have used more standard language, such as “How easy was your overall experience at San Francisco International?” Instead, they choose specific adjectives to get the data they’re looking for. In this case, “quick” and “efficient” are factors that Alaska Airlines can control more directly than the general ease of an airport experience.

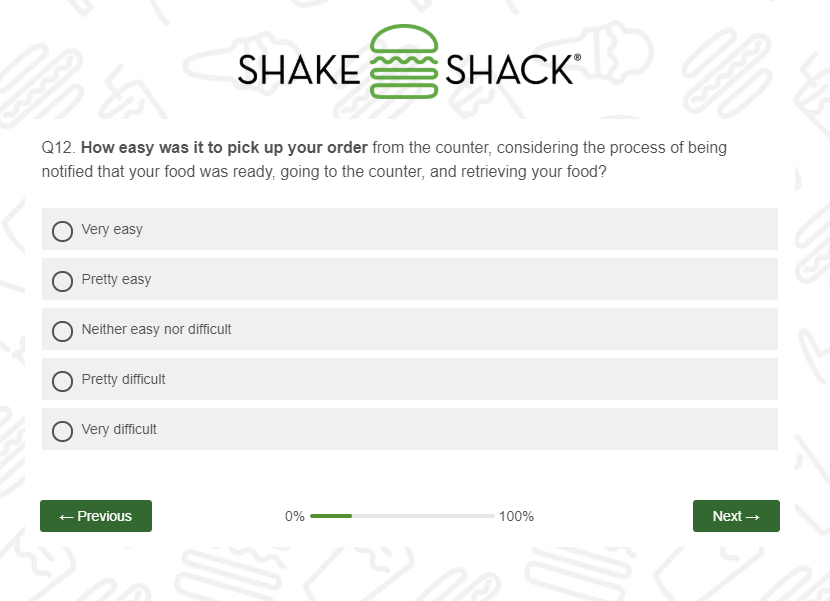

Shake Shack also embeds a CES question within a larger survey. And, like the Alaska Airlines example, they are very prescriptive in their copy to make sure customers evaluate the right factors.

Here, Shake Shack specifically references the process of being notified of their order, going to the counter, and retrieving food. Customers can rate the experience from “very easy” to “very difficult.”

It’s worth noting that Shake Shack has taken the time and effort to make this survey feel like a natural part of the wider user experience. The survey matches Shake Shack’s branding, right down to the color of the progress bar. Visual details like these can go a long way toward making a survey feel more personal and fun.

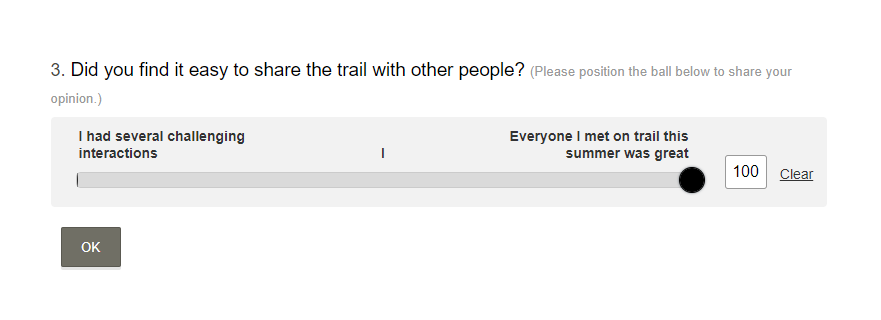

Washington Trails Association (WTA) shares hiking guides, trip reports, and volunteer opportunities to protect the outdoors. Their summer survey focuses on hikers’ experience on the trails, asking CES questions about how easy it was to share the trail with other visitors.

Rather than offering static numbers as response options, WTA asks hikers to drag a ball icon along a sliding scale of 0 to 100, giving them more flexibility in how they answer. And because assigning a number to a hiking trip can be challenging, they add context with a short description at either end of the range.

A CSAT survey typically consists of a single question, with responses captured on a scale of numbers or faces ranging from one end of the emotional spectrum to the other. The goal is to capture how happy or unhappy customers are with a specific experience or interaction.

While NPS and CSAT surveys may seem similar, CSAT is designed to provide feedback on a specific element or interaction while NPS assesses overall happiness.
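CSAT is usually reported as the percentage of respondents who chose one of the top answers, such as a 4 or 5 on a 5-point scale. Here’s a minimal Python sketch, assuming responses are stored as integers starting at 1. How many top answers count as “satisfied” (`top_n`) and the face-to-number mapping are assumptions you would set for your own survey.

```python
def csat_percent(ratings: list[int], scale_max: int = 5, top_n: int = 2) -> float:
    """Percent of ratings in the top `top_n` points of a 1..scale_max scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    if not 1 <= top_n < scale_max:
        raise ValueError("top_n must be at least 1 and smaller than scale_max")
    threshold = scale_max - top_n + 1  # e.g. 4 on a 5-point scale with top_n=2
    satisfied = sum(1 for r in ratings if r >= threshold)
    return 100 * satisfied / len(ratings)


# Hypothetical mapping for a three-face scale; only the happiest face counts.
FACES = {"sad": 1, "neutral": 2, "happy": 3}

print(csat_percent([5, 4, 4, 3, 1]))  # 60.0
print(csat_percent([FACES[f] for f in ["happy", "happy", "neutral", "sad"]],
                   scale_max=3, top_n=1))  # 50.0
```

Because CSAT is tied to a specific interaction, it’s worth storing which interaction each rating belongs to so you can compute this percentage per touchpoint rather than only in aggregate.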

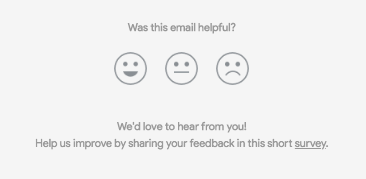

Surveys don’t always need to appear inside your product. To capture user attention, try embedding a CSAT survey in unexpected areas, like in account settings or an email footer.

Nest leverages the often-ignored blank space at the bottom of an email to measure customer satisfaction with their marketing. They ask users to rate the email by clicking on one of three smiley face icons. Importantly, Nest doesn’t make users click and open a new window to start the survey; instead, people can click directly in the email to share their response.

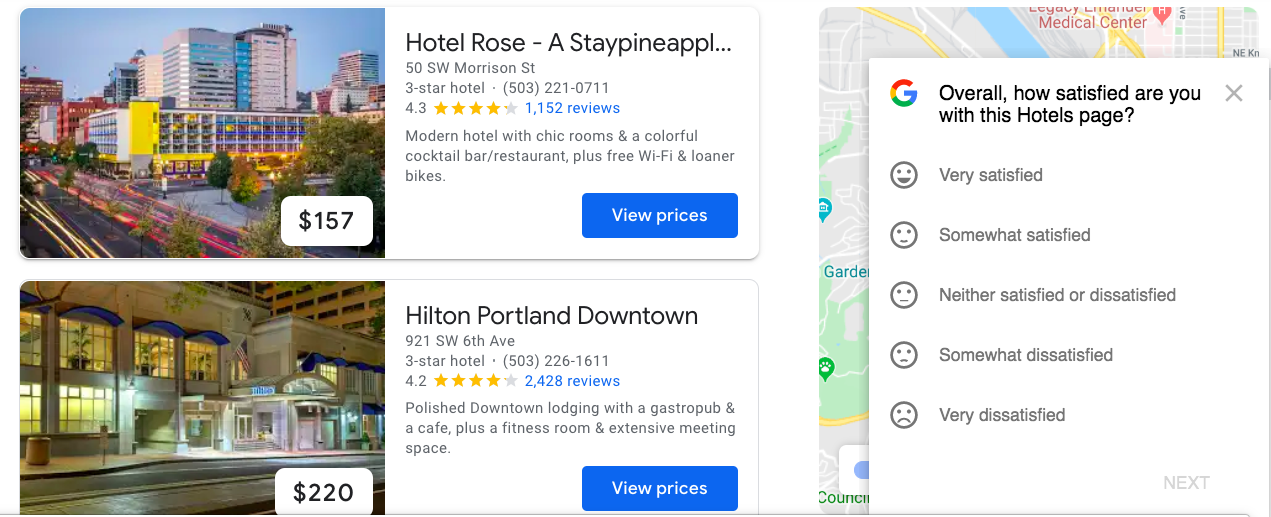

While email is a great way to engage users outside your app, the best way to get contextual feedback is from surveys that are asked when users are performing the exact task you want feedback on. For example, Google asks visitors to rate how satisfied they are with the Google Hotels page when they are in the process of searching for and booking lodging.

Crucially, the modal is carefully placed so it doesn’t hide the most important information on the page: the hotel listings and pricing. Obscuring a user’s workflow to ask for feedback is an easy way to lower your score!

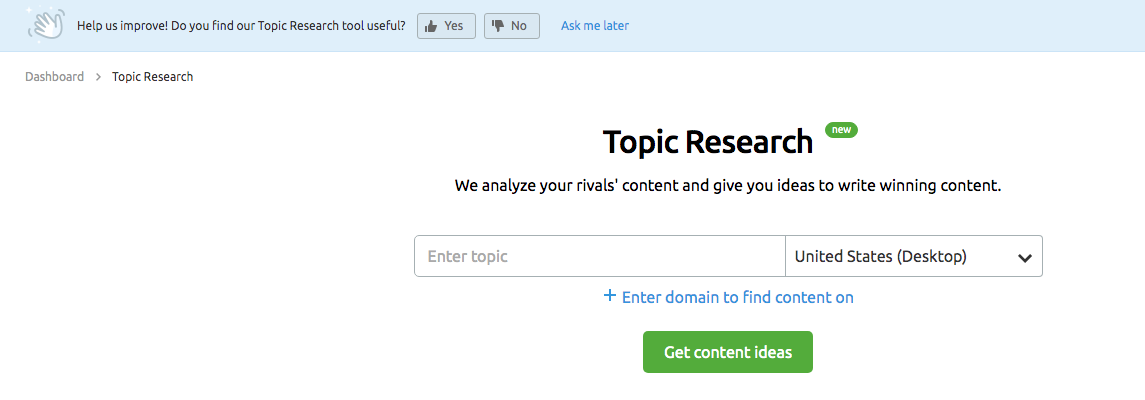

SEMrush, an SEO tool, uses CSAT to complement new feature releases. When users land on the Topic Research section of the dashboard, they see a banner asking if they find the new tool useful. They have the option to answer yes or no, and also to answer the question at a later date once they have had more time to play around with the feature.
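An “answer later” option means your app has to remember each user’s choice. Here’s a hypothetical Python sketch of that logic, not SEMrush’s implementation: the `BannerState` fields, the three choice strings, and the 7-day snooze window are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

SNOOZE = timedelta(days=7)  # assumption: how long "answer later" hides the banner


@dataclass
class BannerState:
    answer: Optional[str] = None           # "yes" or "no" once answered
    snoozed_at: Optional[datetime] = None  # set when the user picks "later"


def record_response(state: BannerState, choice: str, now: datetime) -> None:
    """Store the user's choice: 'yes', 'no', or 'later'."""
    if choice == "later":
        state.snoozed_at = now
    elif choice in ("yes", "no"):
        state.answer = choice
    else:
        raise ValueError(f"unexpected choice: {choice!r}")


def should_show_banner(state: BannerState, now: datetime) -> bool:
    """Show the banner until answered, hiding it during the snooze window."""
    if state.answer is not None:
        return False
    if state.snoozed_at is not None and now - state.snoozed_at < SNOOZE:
        return False
    return True
```

Once a user answers yes or no, the banner never reappears, so people who responded aren’t asked again.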

CSAT surveys alone shouldn’t be used to measure the success of new features. However, when combined with behavioral and product analytics, they can be an excellent way to capture the voice of the customer.
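As an illustration of pairing the two, here’s a hypothetical Python sketch that joins yes/no answers from a feature banner with usage counts from your analytics, so you can see whether the people calling a feature useful are the ones actually using it. The data shapes and the 5-session cutoff for a “heavy” user are assumptions.

```python
from collections import defaultdict


def useful_rate_by_usage(answers: dict[str, str], sessions: dict[str, int],
                         heavy_threshold: int = 5) -> dict[str, float]:
    """Percent of "yes" answers among light vs. heavy users of a feature.

    answers:  user id -> "yes" or "no" from an in-app CSAT prompt
    sessions: user id -> number of sessions in which the user used the feature
    Users missing from `sessions` are treated as having zero sessions.
    """
    buckets: dict[str, list[bool]] = defaultdict(list)
    for user_id, answer in answers.items():
        usage = sessions.get(user_id, 0)
        bucket = "heavy" if usage >= heavy_threshold else "light"
        buckets[bucket].append(answer == "yes")
    return {name: 100 * sum(votes) / len(votes) for name, votes in buckets.items()}


answers = {"a": "yes", "b": "no", "c": "yes", "d": "no"}
sessions = {"a": 8, "b": 1, "c": 6}
print(useful_rate_by_usage(answers, sessions))
```

A big gap between the two groups is a cue to dig into behavioral data before drawing conclusions from the overall score.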

Surveying your customers is one of the fastest, most efficient ways to pinpoint how they’re feeling and understand what is and isn’t working. Just remember: Survey results shouldn’t live in a vacuum. The feedback you gather should go hand-in-hand with in-app behavioral analytics, so you can understand the full picture of your customer experience.

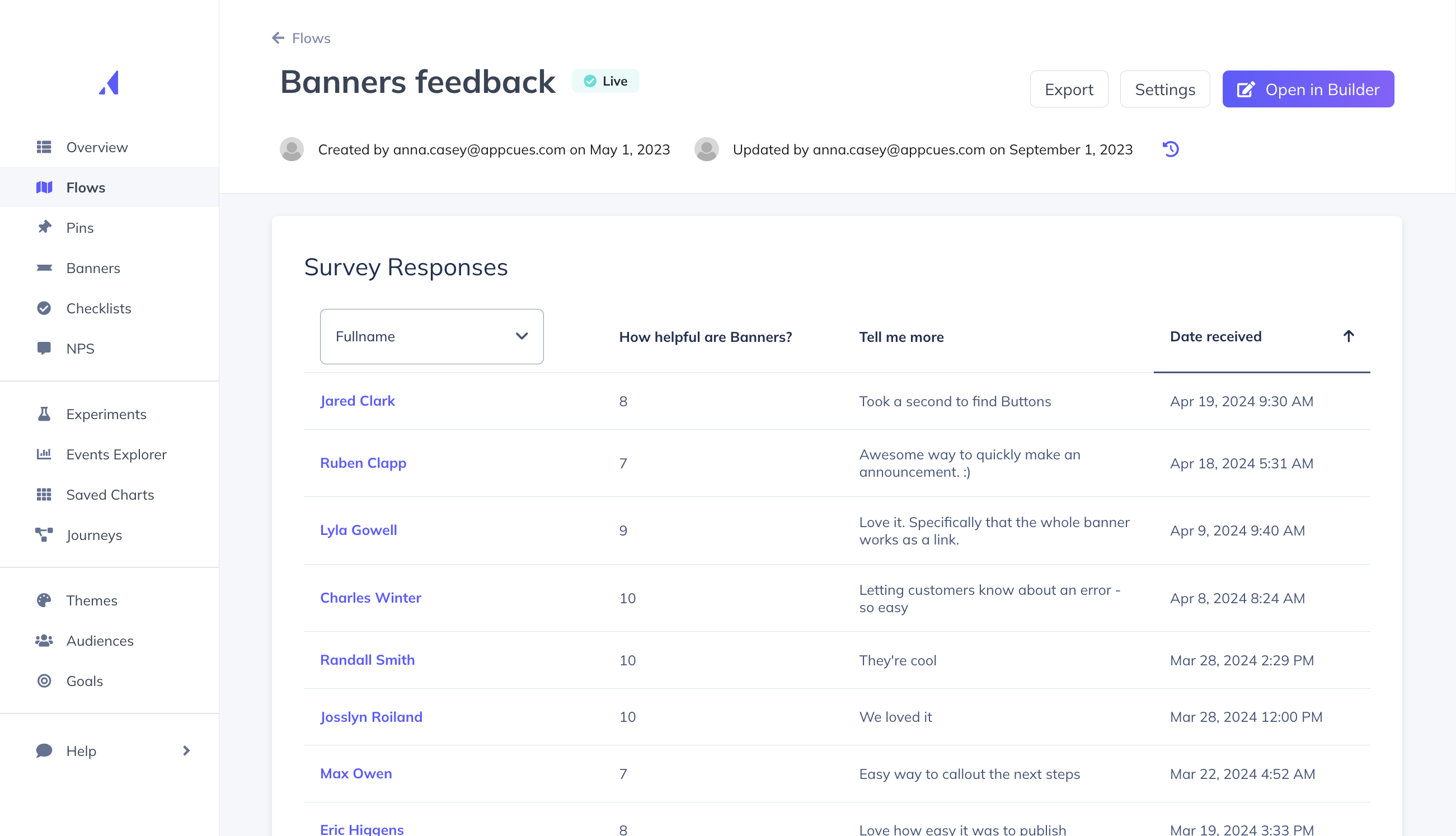

When you use a tool like Appcues, you can see your survey responses in-app, right where you can take action on them.

You can use Appcues to design and target NPS surveys and feedback forms to the right user segments (no coding required). Try Appcues for free to get started.

Already a customer? We have plenty of resources to help you easily create your own NPS and customer feedback forms.