Don't fail fast, fail thoughtfully: How to approach product design failures

To fail is to be unsuccessful in achieving one's goal, and to be fast is to move at high speed.

For a business to fail rapidly seems illogical, yet this fail-fast philosophy is a prevalent, even idolized, approach in the startup and SaaS world today. But why?

In principle it makes sense. Bypassing cumbersome corporate processes lets you try lots of ideas to see what works, which leads to faster incremental improvement. But the problem is that people take the fail-fast approach at face value. It gets carelessly thrown around in meetings, hung up on the walls, and used in hindsight when things go wrong to soften the blow. This carelessness inevitably leads to more failure, which defeats the point.

Minimizing failure and maximizing success is ultimately what you want, but this will happen only when you take the time to approach the fail-fast philosophy strategically and thoughtfully. When you understand why failing fast is dangerous—and how you can fail more thoughtfully—failure will become a key driver of success.

So let's take a look.

Failure will always enjoy a natural advantage. With 90% of startups failing, it seems that wrong answers to any problem outnumber the right ones by a wide margin.

But this does not mean failure is something you should simply accept. Too much failure can be dangerous: It can damage relationships with your users, tarnish your brand, and ultimately hurt the bottom line. But this is what you risk when you fail fast, for a number of reasons.

The fail-fast approach can make teams nonchalant about failure. When failure is not feared, the motivation to produce high-quality work and to execute precise experiments can suffer directly.

The reason is that our actions are motivated both by rewards (positive motivation) and by the avoidance of adversity (negative motivation). When a failed idea has no consequences, the motivation to build quality prototypes and run accurate experiments can deteriorate, reducing your chances of finding success. Over time, teams will throw ideas against the wall to see what sticks. But this scattergun approach can affect more than just quality.

When you try lots of things at the same time, you risk two things. First, your ideas may overlap, so the results of one can be skewed by another idea that you have not accounted for. Second, you run the risk of being tricked by a false positive: a result that wrongly indicates an idea was successful.
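To make the first risk concrete, here is a minimal Python simulation under loudly hypothetical assumptions: a 10% baseline conversion rate, an idea B that adds 3 percentage points, and an idea A that does nothing at all. When A happens to be shown to the same users who also get B, A appears to work; when the two are assigned independently, A's apparent lift falls back to roughly zero. The function name and every number are illustrative, not taken from any real experiment.

```python
import random


def measured_lift_of_a(overlapping: bool, num_users: int = 100_000, seed: int = 7) -> float:
    """Return the apparent conversion lift of hypothetical idea A.

    Hypothetical assumptions: a 10% baseline conversion rate, idea B adds
    3 percentage points, and idea A adds nothing. If `overlapping` is True,
    idea B is shown to exactly the same users as idea A; otherwise B is
    assigned independently of A.
    """
    rng = random.Random(seed)
    base_rate, b_effect = 0.10, 0.03
    conversions = {True: [], False: []}  # keyed by whether the user saw idea A
    for _ in range(num_users):
        saw_a = rng.random() < 0.5
        saw_b = saw_a if overlapping else rng.random() < 0.5
        rate = base_rate + (b_effect if saw_b else 0.0)  # A contributes nothing
        conversions[saw_a].append(1 if rng.random() < rate else 0)
    with_a = sum(conversions[True]) / len(conversions[True])
    without_a = sum(conversions[False]) / len(conversions[False])
    return with_a - without_a


if __name__ == "__main__":
    print(f"Overlapping with B: {measured_lift_of_a(True):+.3f}")
    print(f"Independent of B:   {measured_lift_of_a(False):+.3f}")
```

In the overlapping case, A's measured lift lands close to 0.03, all of it borrowed from idea B; with independent assignment it stays close to zero.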

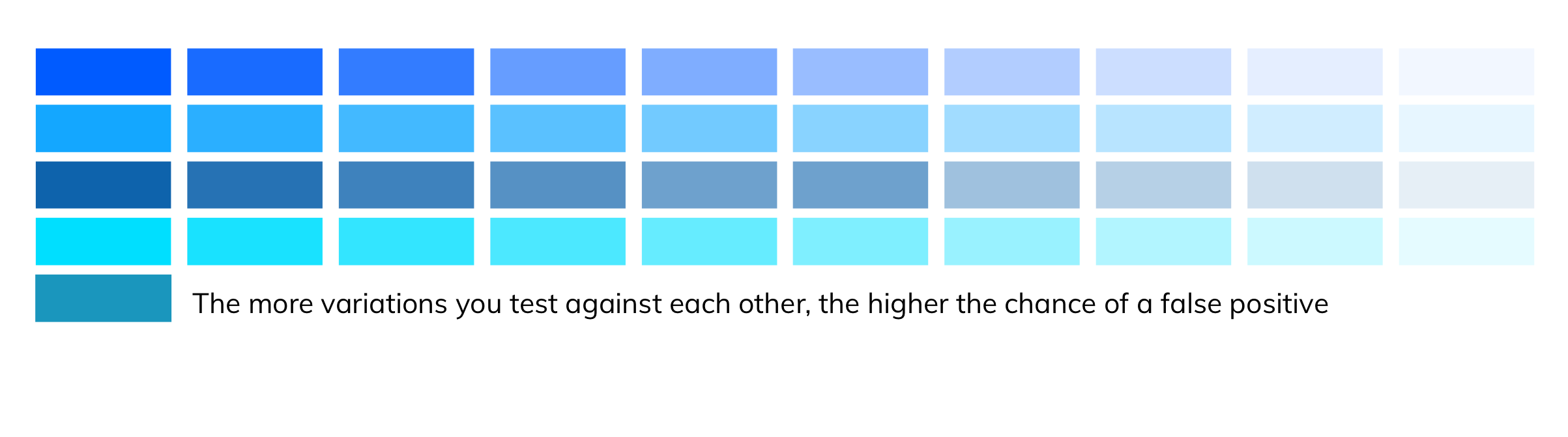

Here's an example: Google wanted to find out which shade of blue worked best for its search links, so it tested 41 of them! But statistically speaking, if each test is run at 95% confidence, any single test has a 5% chance of producing a false positive (an incorrect result). Assuming the tests are independent, the chance of at least one false positive across 41 tests is 1 − 0.95^41, or about 88%. Even with only 10 ideas tested at once, that chance would still be high, at about 40%. So when you throw lots of ideas at the wall, you risk implementing an idea that you think is positive but that could actually be harmful.
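The arithmetic behind these percentages fits in a few lines of Python. This is a sketch that assumes every test is independent and run at a 5% significance level (95% confidence); the function name is hypothetical.

```python
def family_wise_false_positive_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across `num_tests`
    independent tests, each run at significance level `alpha`
    (alpha = 0.05 corresponds to 95% confidence)."""
    if num_tests < 0:
        raise ValueError("num_tests must be non-negative")
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    # Each test avoids a false positive with probability (1 - alpha); assuming
    # independence, all tests avoid one with probability (1 - alpha) ** num_tests.
    return 1.0 - (1.0 - alpha) ** num_tests


if __name__ == "__main__":
    for k in (1, 10, 41):
        print(f"{k:>2} test(s): {family_wise_false_positive_rate(k):.0%}")
```

Running it prints 5%, 40%, and 88% for 1, 10, and 41 tests, which is why a handful of focused experiments is far safer than dozens of simultaneous ones.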

The point here is to not be flippant with failure. Instead, it is best to perform a small number of focused and well-grounded experiments to give your ideas the attention they deserve and to increase the chances of seeing an actual success instead of a fake one.

The fail-fast approach is analogous to our throwaway society, in which anything that does not offer immediate worth is disposed of and replaced without a second thought. But as many successful businesses and products show, ideas take time to prove.

Ideas need patience and persistence to reveal their real potential; otherwise, you will end up throwing value and key learnings in the trash. Ideas need time in the spotlight so that as many users as possible can experience them. This is important because, when trialing ideas, sample size is king: the more exposure your idea gets, the more accurate your results will be, and there is a statistical reason for this.
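A minimal sketch of why sample size matters, assuming a hypothetical idea with a true conversion rate of 10%: the approximate 95% margin of error of a measured proportion is 1.96 × sqrt(p(1 − p) / n), so it shrinks as more users (n) see the idea. This normal approximation is a standard textbook shortcut, not something the original text specifies, and the function name is illustrative.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error for an observed proportion p over n users.

    Uses the normal approximation z * sqrt(p * (1 - p) / n); z = 1.96 gives ~95%.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    # Hypothetical idea with a 10% conversion rate, measured on growing samples.
    for n in (100, 1_000, 10_000):
        print(f"n={n:>6}: 10% ± {margin_of_error(0.10, n):.1%}")
```

The margin narrows from roughly ±5.9 to ±1.9 to ±0.6 percentage points. Because of the square root, 100 times more users shrinks the margin only 10 times, which is one more reason to give ideas time rather than judge them early.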

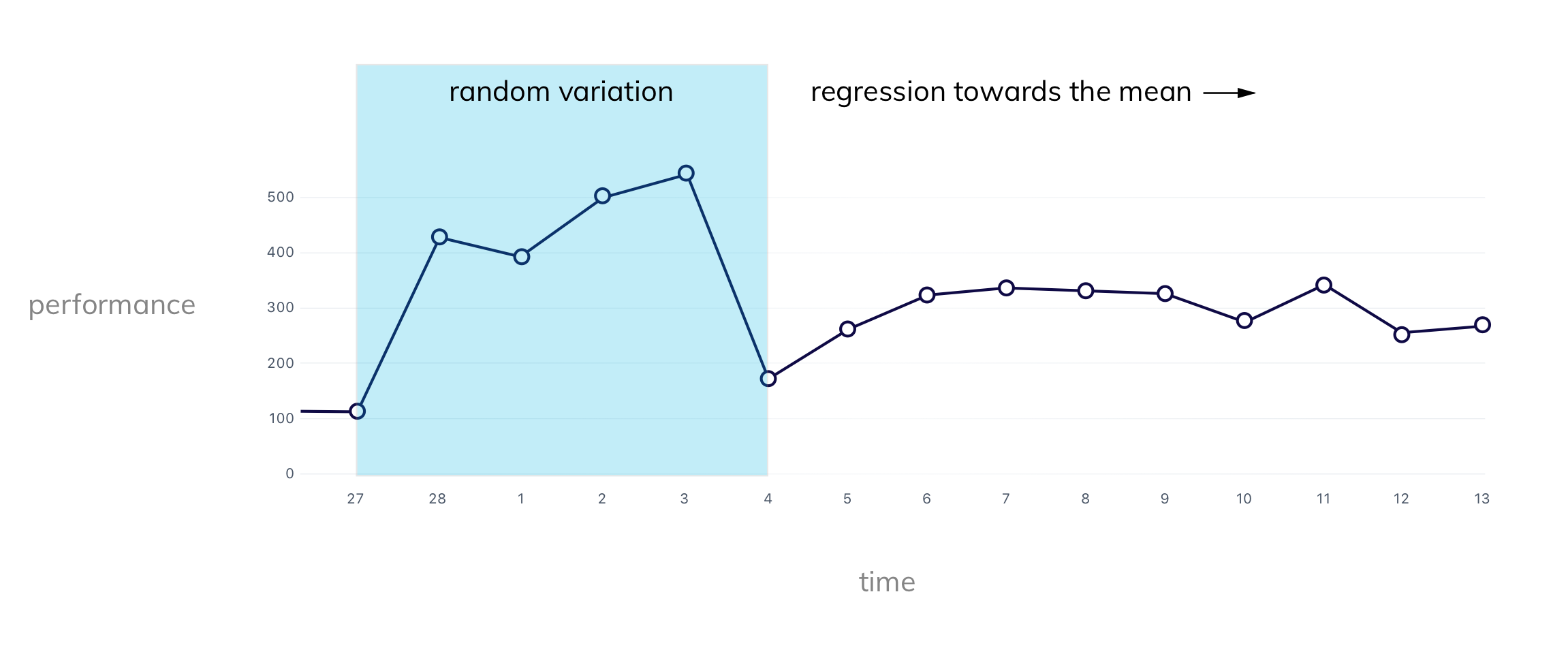

Regression toward the mean is the statistical tendency, in any event where luck is involved (such as how your ideas perform), for extreme outcomes (positive or negative) to be followed by more moderate ones. In other words, the more time you give your ideas, the more the random factors influencing them will even out. More time gives you a more accurate understanding of your ideas' performance, and that is what you want.
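To see regression toward the mean at work, here is a small, seeded Python simulation. It assumes, purely for illustration, that each idea's measured result is its true effect plus independent random luck, and that results are measured in two separate periods; the function name and parameters are hypothetical. The ideas that looked best in the first period average noticeably less in the second, even though nothing about the ideas changed.

```python
import random
import statistics


def regression_to_mean_demo(num_ideas: int = 1000, noise_sd: float = 1.0,
                            top_fraction: float = 0.05, seed: int = 42):
    """Simulate ideas whose measured result = true effect + random luck.

    Returns (early_mean, later_mean) for the ideas that looked best early on.
    """
    rng = random.Random(seed)
    # Hypothetical assumption: true effects are modest and centred on zero.
    true_effects = [rng.gauss(0.0, 0.5) for _ in range(num_ideas)]
    # Two independent measurement periods: same true effect, fresh luck each time.
    early = [t + rng.gauss(0.0, noise_sd) for t in true_effects]
    later = [t + rng.gauss(0.0, noise_sd) for t in true_effects]

    # Pick the ideas that looked most successful right after launch.
    k = max(1, int(num_ideas * top_fraction))
    best_early = sorted(range(num_ideas), key=lambda i: early[i], reverse=True)[:k]

    early_mean = statistics.mean(early[i] for i in best_early)
    later_mean = statistics.mean(later[i] for i in best_early)
    return early_mean, later_mean


if __name__ == "__main__":
    early_mean, later_mean = regression_to_mean_demo()
    print(f"Early 'winners' at launch: {early_mean:.2f}, later: {later_mean:.2f}")
```

Only the luck is re-drawn between periods, so the drop from the early average to the later one is exactly the part of the "roaring success" that was never real.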

A substantial positive or negative impact right after launching your idea is likely, at least in part, a result of chance. It might also be because your idea is novel, an effect that is likely to wear off, or because your users need to come to grips with a new function before its real value is delivered.

Either way, these are things to keep in mind when you are tempted to declare your idea a roaring success or a dramatic failure soon after launch. The key point is that you need to be persistent and patient to get the full story of what your ideas are doing for your product.

To be successful you need to move at speed, but anything that moves too fast can become difficult to manage. If you move too quickly to learn from your failures, then a failure is just a failure—and you are in for a tough time.

Instead of thinking in terms of failing fast, it is better to think in terms of launch and learn.

Learning is the decisive step and deserves more attention, so much so that teams should nail “learn fast” to their meeting walls, not “fail fast.”

If you don't validate your failures with data, then your learnings are entirely subjective. And humans are notoriously biased when it comes to dealing with failure, which can exacerbate the problem.

There's motivation blindness, the tendency to overlook things that work against our ideas because of our investment in them; the bystander effect, in which we are less likely to act on a failure when we know others have seen it too; and pluralistic ignorance, the assumption that nothing is wrong because no one else seems concerned.

To keep these biases from surfacing in the team, you need to stick to a process that ensures your team learns from its failures objectively.

At Google, this takes the shape of a postmortem, in which the team reflects on the most undesirable events of a project. The team discusses what happened, why it happened, how it affected the project, and what can be done to improve in the future. The postmortems are then circulated in an internal monthly newsletter to keep everyone in the loop. This way, each failure becomes common knowledge across the company, which helps ensure that similar failures don't occur in the future.

It is worth remembering that user-centered design, agile development, and the lean startup framework all adhere to the scientific method.

Scientists design experiments to answer questions and to provide a path forward, regardless of the outcome. Scientists fail only when their test is invalid and they are unable to learn anything from the result.

For the scientific method to work, an idea must be prototyped carefully so that it accurately tests the underlying assumption. The methodology must be valid, and the results must be analyzed thoroughly so that you can move forward confidently in the right direction.

When you are thoughtful with your ideas, you can turn any failure into a success.