The complete guide to thriving in your first 30 days in a growth role

Congratulations!

You’ve just accepted a growth marketing role at a new company.

First—celebrate! Call your parents and tell them the good news while casually bringing up that you’re the more successful sibling. Listen to “Don’t Stop Believin’” by Journey while looking out a window. Throw yourself a surprise party!

Then, turn all of your focus towards how you’re going to thrive in your new job.

Whether you’re a first-time growth professional or an industry veteran, the role won’t be easy. A million things will compete for your attention and limited resources. You’ll also likely be expected to make an impact and move the needle right away.

While it's easy to feel overwhelmed, success throughout the role stems from starting strong and building a sturdy framework. To get the foundation needed to make the most of your first 30 days in a growth role, read on.

The first week is all about research and preparation. We’ll guide you through creating a reading list, auditing and organizing existing growth assets, and figuring out which tools you should be using.

With the field of growth marketing moving so rapidly, you need to stay on top of the newest tips, tricks, and strategies. The best growth marketers do this by constantly reading articles and blog posts from other marketers.

When putting together your growth reading list, I recommend using a tool like Feedly, which allows you to plug in your favorite growth influencers and receive a feed of everything they publish.

Make sure you read work from a variety of growth and marketing professionals who cover a wide range of topics, including CRO (conversion rate optimization), SEO, content, analytics, and product marketing.

If you don’t want to take the time to compile your own list, download our master list of growth blogs to follow.

Next, audit the existing growth and marketing assets that your company has. These could include Excel docs of experiments, presentations on brand identity, competitor keyword analyses, and previous versions of the website. Organize these assets into a growth folder for easy access.

Finally, figure out the tools that you’ll be expected to use in this role. Most of your tech stack will already be in use across the company.

At Appcues, we use Slack for cross-company messaging. For analytics, we use Amplitude, Google Analytics, and HubSpot. For our CMS and website, we use Webflow. For email, we use HubSpot and Customer.io. For our CRM tool, we use HubSpot.

Make sure you get logins for all of your tools, and start familiarizing yourself with the ones you’re less comfortable with.

Start investigating which testing tool you’ll use to conduct experiments. Many companies use Optimizely or VWO.

While both are great options, we use VWO for the price point, the data visualization, and the easy editor. If price is not a concern, try demos of both and decide which will make it easier to quickly spin up experiments.

Your second week should be all about getting comfortable with your metrics and deepening your understanding of your customer.

First, find out what your North Star Metric is. Traditionally, this is thought of as the metric that best shows the value you’re delivering to your customer.

For Airbnb, the North Star Metric is nights booked. My own North Star Metric sits higher up the funnel, as that’s where I tend to operate the most. The metric I focus on most is MQLs (marketing qualified leads), which for Appcues means anyone who requests a demo or signs up for a trial.

This might seem overly technical for your second week, but it’s important to understand early on what success will look like for you.

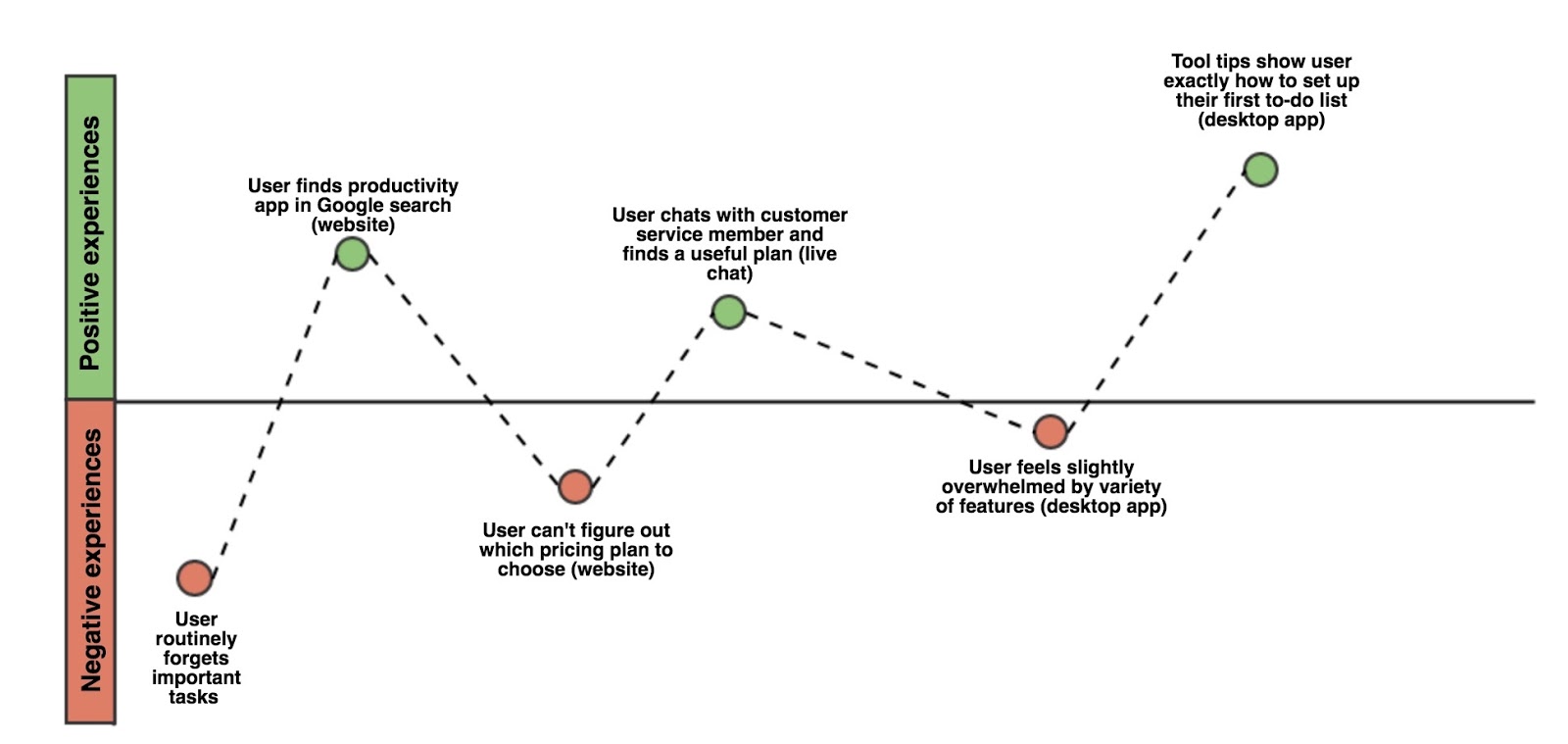

Next, you need to understand on a deep level how people go from never having heard of your brand to becoming power users. This starts with mapping the user journey. Your first draft doesn’t need numbers; just write down what happens to your user persona.

For example, at Appcues the users we focus on are product managers at growth-stage companies. They often first hear about Appcues from organic search, where they may type in something like “user onboarding tips.” They then find this blog, read our articles, and make their way to our main homepage. After they understand how Appcues works and where the value is, they click through to our trial signup page. From there, they put in their information and are brought into the product. They then remain in trial for a period of time unless they start paying for Appcues.

Map out this exact journey for your product and persona. Even if you’re only focusing on a certain stage of the journey—like acquisition—it is important to develop an early understanding of the full customer experience.

After you’ve mapped out the journey, it’s time to create a funnel analysis. This is similar to your journey map but now brings in metrics. Dive into your analytics tools to figure out how many users hit each step. Then, calculate the conversion rate across steps. This way, you will be able to figure out where you are losing potential customers.

For example, you may see that last month 10,000 people visited your blog, and 5,000 of those then went on to visit your homepage. That’s a 50% conversion rate from step 1 to step 2. However, out of those 5,000 website visitors, only 800 requested a trial. That’s only a 16% conversion rate from step 2 to step 3 ((800/5,000) * 100). If you boost this percentage even mildly, say from 16% to 18%, you would gain an extra 100 signups. That’s a 12.5% increase! There seems to be a lot of opportunity here to optimize your website and bring up that conversion rate.
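If you would rather not do this math by hand every month, the funnel arithmetic above can be sketched in a few lines of Python. This is only a minimal sketch: the step names and the `step_conversions` helper are my own illustrative assumptions, and the counts are the example numbers from this post, not real data.

```python
# Funnel steps and visitor counts from the example above (illustrative numbers).
funnel = [
    ("Blog visit", 10_000),
    ("Homepage visit", 5_000),
    ("Trial signup", 800),
]


def step_conversions(steps):
    """Return (from_step, to_step, rate_percent) for each adjacent pair of steps."""
    rates = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        rates.append((name_a, name_b, count_b / count_a * 100))
    return rates


for src, dst, rate in step_conversions(funnel):
    print(f"{src} -> {dst}: {rate:.1f}%")

# What-if: lift the homepage-to-signup rate from 16% to 18%.
homepage_visitors = 5_000
current_signups = 800
projected_signups = round(homepage_visitors * 0.18)
extra_signups = projected_signups - current_signups
relative_lift = extra_signups / current_signups * 100
print(f"Extra signups: {extra_signups} ({relative_lift:.1f}% increase)")
```

Swap in the counts from your own analytics tool and add as many steps as your journey map has. The step with the lowest rate is usually the first place to look for experiments.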

Your third week is about setting up your experimentation framework by organizing a growth meeting and creating a backlog of experimentation ideas.

Growth doesn’t work in isolation. To succeed in this role, you’re going to need to create an atmosphere of growth and ideation—where people all across the company feel comfortable tossing you ideas and potential experiments.

Plan your first growth meeting. While later meetings should focus on evaluating the impact of experiments and going over learnings, this first meeting should be a brainstorm so you will immediately have dozens of experiments in your backlog.

When it comes to who to invite to this brainstorm, I recommend:

The idea is to keep the meeting small to ensure it stays organized, but fill it with people who see things differently to spark conversation and diverse ideas.

Your main role should be to facilitate the discussion. Everyone here has more experience at the company than you (at this point). Get them talking while you whiteboard all the ideas discussed. If it helps, organize the ideas by where they fall in the funnel: Acquisition, Activation, Revenue, Referral, Retention.

For your fourth week, it’s time to put everything you’ve learned together and launch your first experiment.

Before you send anything live, you have to figure out what experiment you’re going to ship. Look over the idea backlog that you put together based on the ideas generated in the brainstorm.

For easy prioritizing, you’ll want to rank each idea based on three criteria: Impact, Confidence, and Ease. The average score of all three is known as the ICE score.

Impact refers to how large an effect on the business you believe the experiment will have. Like all criteria in ICE, you will assign it a number from 1-10, with 1 being minimum impact on the business and 10 being maximum impact on the business.

For example, an A/B test on the subheader of a low-traffic page will likely have minimal impact on your North Star Metric, so you would probably give it a 2 or 3. On the other hand, if you redesigned your entire website, that could have a substantial effect on the business and might deserve an 8 or 9 impact score. Throughout ICE, consistency is more important than the scores themselves, as they will only be used to prioritize ideas relative to one another.

Confidence refers to how sure you are that the experiment will have the result that you predicted. If you are almost 100% sure that redesigning the site will make MQLs skyrocket, give it a high score. If you have no idea whether your hypothesis will hold, it’s best to keep this number low.

Ease refers to how easy this experiment will be to implement. Using the example above, a full redesign will likely require a good amount of time, resources, and convincing other team members this is the right course of action. Not so easy. On the other hand, a subheader A/B test can be spun up in minutes, giving it a high ease score.

To calculate your total ICE score for each idea, average together the idea’s impact, confidence, and ease scores. For the subheader A/B test example, this might be: Impact = 2, Confidence = 6, Ease = 8. That gives it an average ICE score of 5.33. For the redesign example, this might be: Impact = 8, Confidence = 8, Ease = 3. That gives it an average ICE score of 6.33. This would tell you that you should likely focus first on the redesign experiment, as it has the higher overall ICE score.

Rank each of the ideas from your brainstorm using these criteria, and it should become much more apparent where to focus your time first.
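If your backlog lives in a spreadsheet, a formula will do the job. If you prefer a script, here is a minimal Python sketch of the same ICE ranking. The idea names and scores are the two examples from this post, and the `ice_score` helper is a hypothetical name I made up for illustration.

```python
from statistics import mean

# Scores (1-10) taken from the two examples above.
ideas = {
    "Subheader A/B test": {"impact": 2, "confidence": 6, "ease": 8},
    "Full website redesign": {"impact": 8, "confidence": 8, "ease": 3},
}


def ice_score(scores):
    """Average the impact, confidence, and ease scores for one idea."""
    return mean([scores["impact"], scores["confidence"], scores["ease"]])


# Highest ICE score first.
ranked = sorted(ideas.items(), key=lambda item: ice_score(item[1]), reverse=True)

for name, scores in ranked:
    print(f"{name}: {ice_score(scores):.2f}")
```

Add every idea from your brainstorm to `ideas` and the sorted list becomes your prioritized backlog.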

But first, a bit on testing philosophy and methodology: The goal of any good growth marketer is to solve problems.

You can solve any problem in two fundamental ways:

Growth marketing follows way #2. By running multiple experiments a week and allowing yourself to be wrong a lot of the time, you will end up improving your North Star metric at hyperspeed.

The two types of experiments that I typically launch are A/B tests and split URL tests. The main difference between the two is how I set them up.

A/B tests are usually done to evaluate a single simple element on a web page, most often a piece of copy. For example, using VWO’s editor I might create a variation of Appcues’ homepage that has a different headline. It is important to change only one thing when possible during an A/B test, so you can easily attribute any difference between variations to the precise change you made.

The second type of experiment I run, a split URL test, requires a bit more preparation. I use it when I want to make larger changes that go beyond what VWO’s editor can handle, often larger redesigns.

In these instances, I create a copy of the page I want to test using Webflow, the website builder that Appcues uses. I then change the new page, still taking care to only change things that fall under the same hypothesis. For example, if I made every button blue, I would be changing more than one element at once, but all the changes fall under the same hypothesis: that blue CTAs get clicked more than red ones. If instead I changed both the CTA color and the size of the hero image in the same split URL test, there would be no way of knowing the role that each change played in the results I collect.

After the duplicate page has been changed to reflect the new test, I use VWO’s Split URL feature to randomly take 50% of the traffic that was going to the old URL and redirect it to the new one. In VWO, I set up the goals to be tracked for the experiment. The main goals I usually track for a homepage test are visits to the registration page and how many people fill out the form on the registration page to become an MQL. As secondary goals, I usually have VWO track visits from the homepage to our other main pages (product, pricing, etc.) to get a better understanding of how experiments change the way my visitors navigate the site.

Experimentation tools often suggest that you run an experiment until they deem it statistically significant. However, this can take an impossible amount of time if your website’s traffic numbers aren’t massive. (We get ~10,000 monthly visitors to our homepage. VWO suggests that I run most tests for around 150 weeks.) If you’re trying to move quickly, you’re going to need to change these parameters a bit. I’ve found that Kissmetrics' A/B Significance Test tool has a lower threshold of traffic required and can often tell you if your test is statistically significant after just a couple of weeks.
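If you are curious what a significance check does under the hood, here is a minimal sketch of a standard two-proportion z-test in Python. To be clear, this is a textbook method that I am using for illustration; I am not claiming it is what VWO or Kissmetrics runs internally. The function name and the visitor and conversion counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both variations convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF computed from the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical test: 2,500 visitors per variation,
# 400 conversions on the control and 450 on the variation.
z, p = two_proportion_z_test(conv_a=400, n_a=2500, conv_b=450, n_b=2500)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A p-value below 0.05 is the conventional cutoff for calling a result statistically significant. With these made-up numbers, a lift from 16% to 18% lands just above that cutoff, which shows why small lifts on modest traffic take a long time to confirm.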

While it is important to make sure your results are as accurate as possible, as you run more experiments you will build your own criteria for deciding when one is successful. For me, if I’m running an experiment that has gotten decent traffic and one variation has consistently outperformed the other over a period of 2 weeks or more, then I will often consider it successful.

While your first 30 days will set up the foundation of your future success, the best is yet to come.

In the months following, you will hone your experimentation strategy so that you’re comfortable launching multiple tests a week. You will get your first win and, more importantly, your first big learning that will inform future experiments. You will have days where you feel hopeless that nothing you do will move the needle and other days where every experiment seems to land perfectly.

It’s all part of a young field that’s changing every day, and you are now helping to drive that change. So learn these fundamentals well, and then break them however you see fit.