The rise of the privacy-conscious consumer

[Editor's note: We're so excited to share this article from Jenny Wagner—originally published on her blog.]

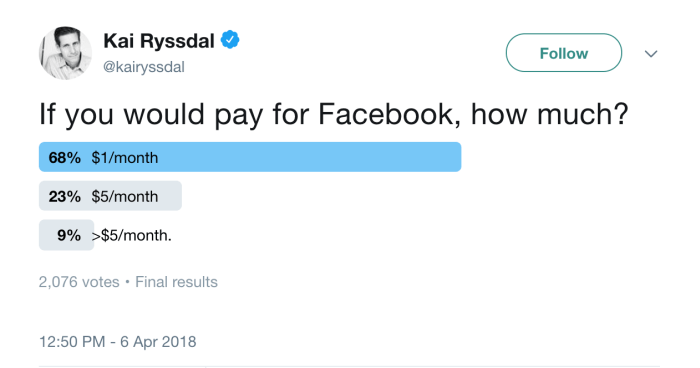

When Elon Musk hopped on the #deleteFacebook bandwagon, it became clear that public perception of internet privacy is changing in light of Facebook’s recent issues with Cambridge Analytica.

As a researcher on attitudes toward digital privacy and an advocate for user-friendly privacy practices, I’m excited by these developments. I’ve been following the conversation and have started seeing evidence of a long-term shift in consumer attitudes towards increased security and control. Welcome to the era of the privacy-conscious consumer.

Reporting has focused on social media, but I doubt it will stay in that category. What makes the information Facebook collects on you different from the information Yelp gathers when you submit a review or all the information Amazon has on each of its customers? The time of reckoning has come, and the era of indiscriminate data collection is ending.

Whether you work for a social media company or any other digital organization, it’s time to get ahead of the conversation on digital privacy by doing more to protect your customers.

An individual’s feelings about sharing any information with a company at a given moment in time will always fall along two axes: how much they value the right to anonymity (versus the importance of transparency); and how much they trust the company’s ability and willingness to keep their data private. I developed this framework through numerous interviews with individuals, consultations with privacy experts, and a review of extensive secondary research.

On the opposite end from anonymity lies the desire for transparency. While many people see the power that transparency and openness can have, they believe in the right to protect themselves and others through privacy. They see the online abuse of marginalized groups and the damage implicit bias does in hiring as good examples of why anonymity matters. Of course, whether you value transparency or anonymity can vary from topic to topic: some people are happy to talk in great detail about their professional lives, but don’t want to share information about their families. Others will share data in text format—for example, sharing information on where they’re traveling—but refuse to share photographic data, such as photos from that vacation.

The other axis is the consumer’s level of trust in the company. This is driven by two questions: “Does this company have my best interests at heart?” and “Does this company have the ability to keep my data safe?”

The question of best interests comes down to the perceived motives of the company. The biggest impact of the Cambridge Analytica revelations has been that people no longer believe Facebook is dedicated to its mission of giving “people the power to build community and bring the world closer together.” More users now believe the company is motivated by profit rather than by idealism. Conversations have shifted from “Facebook allows me to share what I want and pays for that by advertising to me” to “Facebook sells my personal data.”

The other side of trust has to do with the company’s technical capabilities: can the company protect my data against the bad actors across the internet who want to steal it? Equifax’s recent data breach is a perfect example of this. While some might have trusted them, the breach demonstrated their inability to keep user data safe. Consumers are hesitant to share a credit card number, location, or anything else if they don’t trust the company’s information security.

No individual is forever fixed on either axis. My research has shown that people often start in the upper-right quadrant as “frequent sharers.” As they become more technically savvy or learn more about the risks of data sharing, they drift down and to the left, becoming more cautious and private.

People are beginning to understand how easy it is for their data to be shared without their knowledge or consent. Many are asking questions about how to use technology to protect themselves. As individuals learn more about psychographics and the spread of fake news, they have a greater desire for privacy and control. In combination, this technical sophistication and increased level of concern will lead to fewer people wanting to share information of any type.

Many companies’ business models rely on some form of data sharing—whether through placing advertisements or installing cookies to help provide a more seamless browsing experience. As we see more privacy-conscious consumers, these companies’ business models will be challenged.

Consumers will not change how they feel about privacy and anonymity based on your app—that’s about their own personal attitudes and beliefs. But they will change how they feel about sharing with different companies. The most important thing companies can do is get ahead of the shift by being trustworthy. This includes supporting a privacy bill of rights. Europe’s GDPR privacy law forces companies to take the first step towards this, and it is probably only a matter of time before similar regulation comes to the US.

Designing products with built-in privacy isn’t only about regulation; it also happens to be the right thing to do. It establishes greater trust because it allows you to take the moral high ground and demonstrate that you are actually trustworthy: you are giving your customers control of their own data and holding yourself accountable for taking care of them. Most people’s attitudes towards privacy and transparency are fixed, but how they feel about you as a company is under your control.